Author an AI agent and deploy it on Databricks Apps

Build an AI agent and deploy it using Databricks Apps. Databricks Apps gives you full control over the agent code, server configuration, and deployment workflow. This approach is ideal when you need custom server behavior, git-based versioning, or local IDE development.

If your agent uses only Databricks-hosted tools and does not need custom logic between tool calls, you can use the Supervisor API (Beta) to let Databricks manage the agent loop for you.

Every conversational agent template includes a built-in chat UI with no additional setup required. The chat UI supports streaming responses, markdown rendering, Databricks authentication, and optional persistent chat history.

Requirements

Enable Databricks Apps in your workspace. See Set up your Databricks Apps workspace and development environment.

Step 1. Clone the agent app template

Get started by using a pre-built agent template from the Databricks app templates repository.

This tutorial uses the agent-openai-agents-sdk template, which includes:

- An agent created using the OpenAI Agents SDK

- Starter code for an agent application with a conversational REST API and an interactive chat UI

- Code to evaluate the agent using MLflow

Choose one of the following paths to set up the template:

- Workspace UI

- Clone from GitHub

Install the app template using the Workspace UI. This installs the app and deploys it to a compute resource in your workspace. You can then sync the application files to your local environment for further development.

- In your Databricks workspace, click + New > App.

- Click Agents > Custom Agent (OpenAI SDK).

- Create a new MLflow experiment with the name openai-agents-template and complete the rest of the setup to install the template.

- After you create the app, click the app URL to open the chat UI.

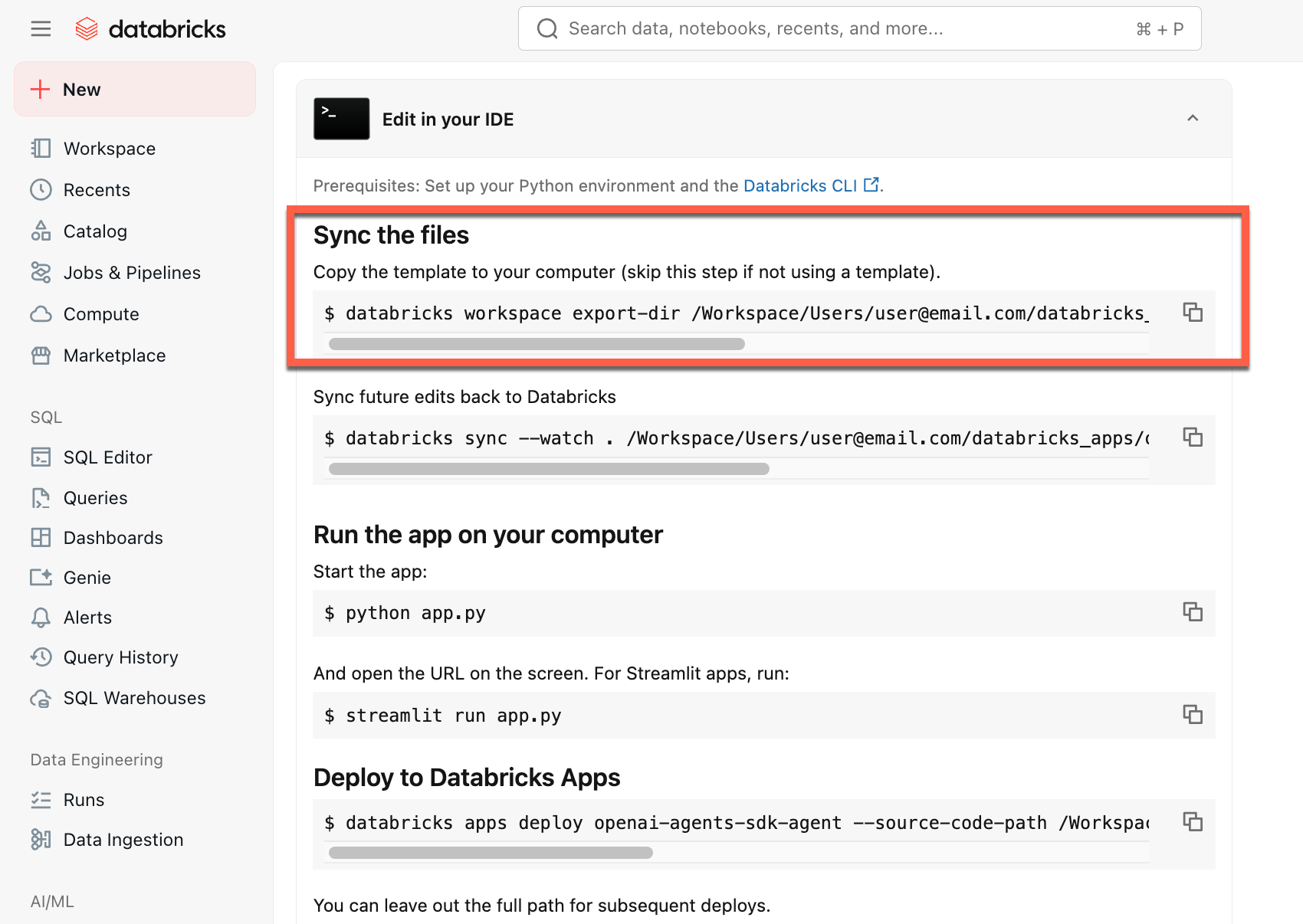

After you create the app, download the source code to your local machine to customize it:

- Copy the first command under Sync the files.

- In a local terminal, run the copied command.

To start from a local environment, clone the agent template repository and open the agent-openai-agents-sdk directory:

git clone https://github.com/databricks/app-templates.git

cd app-templates/agent-openai-agents-sdk

Step 2. Understand the agent application

The agent template demonstrates a production-ready architecture with these key components. Open the following sections for more details about each component:


Built-in chat UI


The agent template automatically fetches and runs the chat app template as its frontend. This chat UI is bundled into the same Databricks Apps deployment and served alongside your agent, so there is no additional setup required.

You can customize the chat UI directly in your project. For more details on the chat app's features, including how to enable persistent chat history and user feedback collection, see Build and share a chat UI with Databricks Apps.

MLflow AgentServer


An async FastAPI server that handles agent requests with built-in tracing and observability. The AgentServer provides the /responses endpoint for querying your agent and automatically manages request routing, logging, and error handling.

ResponsesAgent interface

Databricks recommends the MLflow ResponsesAgent interface for building agents. ResponsesAgent lets you build agents with any third-party framework, then integrate them with Databricks AI features for robust logging, tracing, evaluation, deployment, and monitoring capabilities.

To learn how to create a ResponsesAgent, see the examples in MLflow documentation - ResponsesAgent for Model Serving.

ResponsesAgent provides the following benefits:

- Advanced agent capabilities

  - Multi-agent support

  - Streaming output: Stream the output in smaller chunks.

  - Comprehensive tool-calling message history: Return multiple messages, including intermediate tool-calling messages, for improved quality and conversation management.

  - Tool-calling confirmation support

  - Long-running tool support

- Streamlined development, deployment, and monitoring

  - Author agents using any framework: Wrap any existing agent using the ResponsesAgent interface to get out-of-the-box compatibility with AI Playground, Agent Evaluation, and Agent Monitoring.

  - Typed authoring interfaces: Write agent code using typed Python classes, benefiting from IDE and notebook autocomplete.

  - Automatic tracing: MLflow automatically aggregates streamed responses in traces for easier evaluation and display.

  - Compatible with the OpenAI Responses schema: See OpenAI: Responses vs. ChatCompletion.

OpenAI Agents SDK


The template uses the OpenAI Agents SDK as the agent framework for conversation management and tool orchestration. You can author agents using any framework. The key is wrapping your agent with the MLflow ResponsesAgent interface.

MCP (Model Context Protocol) servers


The template connects to Databricks MCP servers to give agents access to tools and data sources. See Model Context Protocol (MCP) on Databricks.

Author agents using AI coding assistants

Databricks recommends using AI coding assistants such as Claude, Cursor, and Copilot to author agents. The template provides agent skills in /.claude/skills and an AGENTS.md file that help AI assistants understand the project structure, available tools, and best practices. Assistants can read those files automatically to develop and deploy Databricks Apps.

Step 3. Add tools to your agent

Give your agent capabilities like querying databases, searching documents, or calling external APIs by connecting it to MCP servers. The agent template includes a default MCP server connection. To add more tools, configure additional MCP servers in your agent code and grant the required permissions in databricks.yml.

See AI agent tools for supported tool types and code examples.

Define local Python function tools

For operations that don't require external data sources or APIs, define tools directly in your agent code. These tools run in the same process as your agent and are useful for data transformations, calculations, or utility operations.

- OpenAI Agents SDK

- LangGraph

Use the @function_tool decorator from the OpenAI Agents SDK:

from datetime import datetime

from agents import Agent, function_tool

@function_tool
def get_current_time() -> str:
    """Get the current date and time."""
    return datetime.now().isoformat()

agent = Agent(
    name="My agent",
    instructions="You are a helpful assistant.",
    model="databricks-claude-sonnet-4-5",
    tools=[get_current_time],
)

Use the @tool decorator from LangChain:

from datetime import datetime

from langchain_core.tools import tool
from langgraph.prebuilt import create_react_agent
from databricks_langchain import ChatDatabricks

@tool
def get_current_time() -> str:
    """Get the current date and time."""
    return datetime.now().isoformat()

agent = create_react_agent(
    ChatDatabricks(endpoint="databricks-claude-sonnet-4-5"),
    tools=[get_current_time],
)

Local function tools don't require resource grants in databricks.yml because they run within the agent process.
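Because a local tool is an ordinary Python function, you can write and unit-test its logic before registering it with a framework decorator. The following is a minimal sketch of a calculation-style tool. The function name convert_temperature and its parameters are hypothetical and are not part of the template. Type hints and a docstring are included on the assumption that the agent framework uses them to describe the tool to the model.

```python
def convert_temperature(value: float, to_unit: str) -> float:
    """Convert a temperature between Celsius and Fahrenheit.

    Hypothetical example tool, not part of the agent template.

    Args:
        value: The temperature to convert.
        to_unit: Target unit, either "C" or "F".
    """
    unit = to_unit.upper()
    if unit == "F":
        # Input is treated as Celsius.
        return value * 9 / 5 + 32
    if unit == "C":
        # Input is treated as Fahrenheit.
        return (value - 32) * 5 / 9
    raise ValueError(f"Unsupported unit: {to_unit!r}")
```

Once the function behaves as expected, apply the framework decorator shown above (@function_tool or @tool) and add it to the agent's tools list.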

Step 4. Govern LLM usage from your agents on Databricks Apps with Unity AI Gateway

Route your agent's LLM calls through Unity AI Gateway (Beta) so every request is governed by the same controls regardless of which provider answers it. With the gateway in the request path, you can centralize permissions, attribute cost per app, swap models, and inspect or replay traffic without modifying agent code or rotating provider credentials.

This feature is in Beta. Workspace admins can control access to this feature from the Previews page. See Manage Databricks previews.

- Enable Unity AI Gateway on your workspace. Unity AI Gateway is opt-in during Beta. An account admin must turn it on from the account console Previews page before you can create or query gateway endpoints. See Manage Databricks previews.

- Point your agent at a Unity AI Gateway endpoint. In your agent code, pass the Unity AI Gateway endpoint name as the model argument and set use_ai_gateway=True on the Databricks LLM client. The client routes traffic through the gateway and handles authentication automatically.

OpenAI:

from agents import Agent, set_default_openai_api, set_default_openai_client
from databricks_openai import AsyncDatabricksOpenAI

set_default_openai_client(AsyncDatabricksOpenAI(use_ai_gateway=True))
set_default_openai_api("chat_completions")

agent = Agent(
    name="Agent",
    instructions="You are a helpful assistant.",
    model="<ai-gateway-endpoint>",
)

LangGraph:

from databricks_langchain import ChatDatabricks

llm = ChatDatabricks(
    model="<ai-gateway-endpoint>",
    use_ai_gateway=True,
)

For additional API surfaces (OpenAI Responses API, Anthropic Messages API, Google Gemini) and REST examples, see Query Unity AI Gateway endpoints.

Advanced authoring topics

Streaming responses


Streaming allows agents to send responses in real-time chunks instead of waiting for the complete response. To implement streaming with ResponsesAgent, emit a series of delta events followed by a final completion event:

- Emit delta events: Send multiple output_text.delta events with the same item_id to stream text chunks in real-time.

- Finish with done event: Send a final response.output_item.done event with the same item_id as the delta events, containing the complete final output text.

Each delta event streams a chunk of text to the client. The final done event contains the complete response text and signals Databricks to do the following:

- Trace your agent's output with MLflow tracing

- Aggregate streamed responses in Unity AI Gateway inference tables

- Show the complete output in the AI Playground UI
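The delta-then-done sequence described above can be sketched with plain dictionaries. This is an illustration only, not the MLflow implementation: the helper name stream_text_events is hypothetical, and the exact field layout (the type strings and the shape of the final item) is an assumption modeled on the OpenAI Responses streaming schema. Check the MLflow ResponsesAgent documentation for the authoritative event shapes.

```python
import uuid
from typing import Iterable, Iterator


def stream_text_events(chunks: Iterable[str]) -> Iterator[dict]:
    """Yield one delta event per chunk, then a single done event.

    Hypothetical helper; field names are assumptions, not the MLflow API.
    """
    item_id = str(uuid.uuid4())  # every event for this message shares one item_id
    parts = []
    for chunk in chunks:
        parts.append(chunk)
        yield {
            "type": "response.output_text.delta",
            "item_id": item_id,
            "delta": chunk,
        }
    # The done event reuses the same item_id and carries the complete text.
    yield {
        "type": "response.output_item.done",
        "item": {
            "id": item_id,
            "type": "message",
            "role": "assistant",
            "content": [{"type": "output_text", "text": "".join(parts)}],
        },
    }


events = list(stream_text_events(["Hel", "lo ", "world"]))
```

The point of the sketch is the invariant: all deltas and the final done event refer to the same item_id, and the done event repeats the full text rather than only the last chunk.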

Streaming error propagation

Databricks propagates any errors encountered while streaming with the last token under databricks_output.error. It is up to the calling client to properly handle and surface this error.

{
  "delta": …,
  "databricks_output": {
    "trace": {...},
    "error": {
      "error_code": "BAD_REQUEST",
      "message": "TimeoutException: Tool XYZ failed to execute."
    }
  }
}
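Because the error arrives inside an otherwise normal chunk, a client has to inspect each chunk rather than rely on the HTTP status alone. The following is a minimal client-side sketch, assuming each streamed chunk has already been decoded to a JSON string with the shape shown above. The names AgentStreamError and check_chunk are hypothetical.

```python
import json


class AgentStreamError(RuntimeError):
    """Raised when a streamed chunk carries databricks_output.error."""


def check_chunk(raw: str) -> dict:
    """Parse one streamed JSON chunk and raise if it reports an error."""
    chunk = json.loads(raw)
    # databricks_output may be absent on ordinary chunks.
    error = (chunk.get("databricks_output") or {}).get("error")
    if error:
        raise AgentStreamError(
            f"{error.get('error_code')}: {error.get('message')}"
        )
    return chunk
```

Raising a dedicated exception lets the caller decide whether to retry, show the message to the user, or log it alongside the trace.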

Custom inputs and outputs


Some scenarios might require additional agent inputs, such as client_type and session_id, or outputs like retrieval source links that should not be included in the chat history for future interactions.

For these scenarios, MLflow ResponsesAgent natively supports the fields custom_inputs and custom_outputs. You can access the custom inputs via request.custom_inputs in the framework examples above.

The Agent Evaluation review app does not support rendering traces for agents with additional input fields.
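As a rough illustration of how custom inputs travel alongside the conversation, the sketch below builds a request body and reads an optional field from it. It uses plain dictionaries rather than the MLflow request classes. The helper names build_request and read_session_id are hypothetical, and the keys client_type and session_id are the example inputs mentioned above.

```python
import json


def build_request(user_message: str, custom_inputs: dict) -> dict:
    """Build a request body that carries extra inputs outside the chat history."""
    return {
        "input": [{"role": "user", "content": user_message}],
        "custom_inputs": custom_inputs,
    }


def read_session_id(request_body: dict, default: str = "anonymous") -> str:
    """Read an optional custom input, tolerating requests that omit the field."""
    return (request_body.get("custom_inputs") or {}).get("session_id", default)


body = build_request("hi", {"client_type": "web", "session_id": "abc-123"})
print(json.dumps(body, indent=2))
```

Reading custom inputs defensively matters because clients such as the built-in chat UI may send requests without the field.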

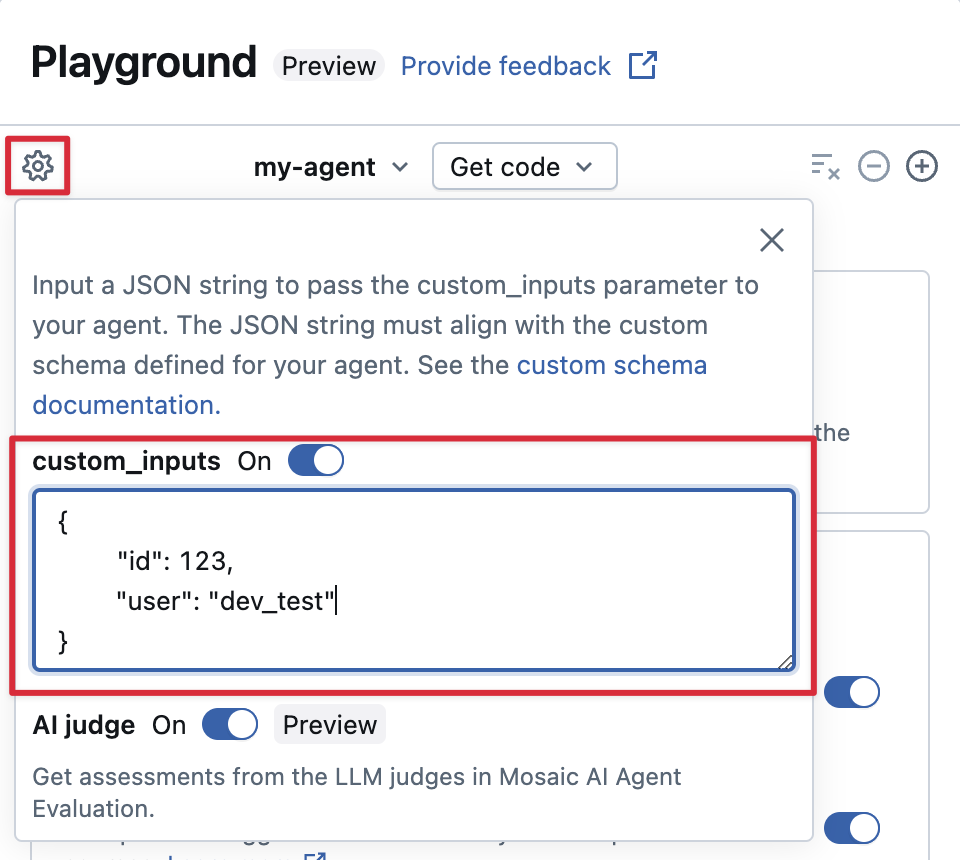

Provide custom_inputs in the AI Playground and review app

If your agent accepts additional inputs using the custom_inputs field, you can manually provide these inputs in both the AI Playground and the review app.

- In either the AI Playground or the Agent Review App, select the gear icon.

- Enable custom_inputs.

- Provide a JSON object that matches your agent's defined input schema.

Step 5. Run the agent app locally

Set up your local environment:

- Install uv (Python package manager), nvm (Node version manager), and the Databricks CLI:

  - uv installation

  - nvm installation

  - Run the following to use Node 20 LTS:

    nvm use 20

  - Databricks CLI installation

- Change directory to the agent-openai-agents-sdk folder.

- Run the provided quickstart scripts to install dependencies, set up your environment, and start the app.

  uv run quickstart

  uv run start-app

In a browser, go to http://localhost:8000 to open the built-in chat UI and start chatting with the agent.

Step 6. Configure authentication

Your agent needs authentication to access Databricks resources. Databricks Apps provides two authentication methods: app authorization (service principal) and user authorization (on-behalf-of-user). You can configure either one through the workspace UI or declaratively in databricks.yml with Declarative Automation Bundles. The agent templates ship with a databricks.yml, so that path is the default when you start from a template.

For the complete reference, including all supported resource types, permission values, and an end-to-end databricks.yml walkthrough, see Authentication for AI agents.

- App authorization (default)

- User authorization

App authorization uses a service principal that Databricks automatically creates for your app. All users share the same permissions.

Declare every resource the agent uses under resources.apps.<app>.resources in databricks.yml. Deploy the bundle to grant the service principal the declared permissions:

resources:
  apps:
    agent_openai_agents_sdk:
      name: 'agent-openai-agents-sdk'
      source_code_path: ./
      config:
        command: ['uv', 'run', 'start-app']
        env:
          - name: MLFLOW_TRACKING_URI
            value: 'databricks'
          - name: MLFLOW_REGISTRY_URI
            value: 'databricks-uc'
          - name: MLFLOW_EXPERIMENT_ID
            value_from: 'experiment'
      resources:
        - name: 'experiment'
          experiment:
            experiment_id: '<experiment-id>'
            permission: 'CAN_EDIT'
        - name: 'llm'
          serving_endpoint:
            name: 'databricks-claude-sonnet-4-5'
            permission: 'CAN_QUERY'

databricks bundle deploy

databricks bundle run agent_openai_agents_sdk

For the full list of resource types, see App authorization.
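The env entries in databricks.yml reach the app as environment variables, so a quick startup check can catch a missing or misnamed resource reference before the agent handles traffic. The following is a minimal sketch. The helper missing_env is hypothetical and not part of the template, and the variable names are the ones declared in the example above.

```python
import os

# Variables declared under config.env in the databricks.yml example.
REQUIRED_ENV = (
    "MLFLOW_TRACKING_URI",
    "MLFLOW_REGISTRY_URI",
    "MLFLOW_EXPERIMENT_ID",
)


def missing_env(environ=os.environ) -> list:
    """Return the names of required variables that are unset or empty."""
    return [name for name in REQUIRED_ENV if not environ.get(name)]


# Example with an explicit mapping instead of the real process environment.
example = missing_env({"MLFLOW_TRACKING_URI": "databricks"})
```

In an app, you might call missing_env() during startup and fail fast with a clear message if the returned list is not empty.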

User authorization lets your agent act with each user's individual permissions. Use this when you need per-user access control or audit trails.

Add this code to your agent:

from agent_server.utils import get_user_workspace_client

# In your agent code (inside @invoke or @stream)
user_workspace = get_user_workspace_client()

# Access resources with the user's permissions
response = user_workspace.serving_endpoints.query(name="my-endpoint", inputs=inputs)

Initialize get_user_workspace_client() inside your @invoke or @stream functions, not during app startup. User credentials only exist when handling a request.
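To see why the call must happen inside a request handler, consider the following self-contained sketch of request-scoped credentials. It does not show how the template implements get_user_workspace_client(). It is a hypothetical model that uses the standard-library contextvars module, and every name in it is illustrative.

```python
import contextvars

# Hypothetical stand-in for the per-request user token supplied by the app runtime.
_user_token = contextvars.ContextVar("user_token", default=None)


def get_user_token() -> str:
    """Return the current user's token, or fail if no request is active."""
    token = _user_token.get()
    if token is None:
        raise RuntimeError("No user credentials: not inside a request")
    return token


def handle_request(token: str) -> str:
    """Simulate a request handler: credentials exist only within this call."""
    reset = _user_token.set(token)
    try:
        return get_user_token()  # works because a request is being handled
    finally:
        _user_token.reset(reset)  # credentials disappear when the request ends
```

In this model, calling get_user_token() at import time or during startup raises an error, which is the same class of failure you avoid by creating the user workspace client inside @invoke or @stream.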

Configure which Databricks APIs the agent can call on the user's behalf by adding scopes under user_api_scopes on the app in databricks.yml:

resources:
  apps:
    agent_openai_agents_sdk:
      name: 'agent-openai-agents-sdk'
      source_code_path: ./
      user_api_scopes:
        - sql
        - dashboards.genie
        - serving.serving-endpoints

databricks bundle deploy

databricks bundle run agent_openai_agents_sdk

For the list of available scopes and complete setup instructions, see User authorization.

Step 7. Evaluate the agent

The template includes agent evaluation code. See agent_server/evaluate_agent.py for more information. Evaluate the relevance and safety of your agent's responses by running the following in a terminal:

uv run agent-evaluate

Step 8. Deploy the agent to Databricks Apps

After configuring authentication, deploy your agent to Databricks. The agent templates use Databricks Asset Bundles (DABs) for deployment. The databricks.yml file in the template defines the app configuration and resource permissions. Ensure you have the Databricks CLI installed and configured.

If you created your app through the Workspace UI in Step 1, run databricks bundle deployment bind agent_openai_agents_sdk <app-name> --auto-approve before deploying to bind the existing app to your bundle. Otherwise, databricks bundle deploy fails with "An app with the same name already exists".

- Validate the bundle configuration to catch errors before deploying:

  databricks bundle validate

- Deploy the bundle. This uploads your code and configures resources (MLflow experiment, serving endpoints, and so on) defined in databricks.yml:

  databricks bundle deploy

- Start or restart the app:

  databricks bundle run agent_openai_agents_sdk

  Note: bundle deploy only uploads files and configures resources. bundle run is required to start or restart the app with the new code.

For future updates, run databricks bundle deploy and then databricks bundle run agent_openai_agents_sdk to redeploy.
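If you redeploy often, the validate, deploy, and run sequence can be wrapped in a small script. The following is a minimal sketch that shells out to the same CLI commands listed above and stops at the first failure. The function names are hypothetical, and it assumes the Databricks CLI is installed, authenticated, and run from the bundle root.

```python
import subprocess

APP_KEY = "agent_openai_agents_sdk"  # bundle resource key used in this tutorial


def deploy_commands(app_key: str = APP_KEY) -> list:
    """Return the CLI commands for a full redeploy, in order."""
    return [
        ["databricks", "bundle", "validate"],
        ["databricks", "bundle", "deploy"],
        ["databricks", "bundle", "run", app_key],
    ]


def deploy(runner=subprocess.run) -> None:
    """Run each step in order; check=True raises on the first failing step."""
    for cmd in deploy_commands():
        runner(cmd, check=True)
```

Call deploy() from the directory that contains databricks.yml. The runner parameter exists only so the sequence can be tested without invoking the CLI.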

Step 9. Query the deployed agent

The following example uses a quick curl request with an OAuth token. Personal access tokens (PATs) are not supported for Databricks Apps.

For the full list of query methods, including the Databricks OpenAI Client and REST API, see Query an agent deployed on Databricks.

Generate an OAuth token using the Databricks CLI:

databricks auth login --host <https://host.databricks.com>

databricks auth token

Use the token to query the agent:

curl -X POST <app-url.databricksapps.com>/responses \
  -H "Authorization: Bearer <oauth token>" \
  -H "Content-Type: application/json" \
  -d '{ "input": [{ "role": "user", "content": "hi" }], "stream": true }'
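The same request can be built from Python with only the standard library. The following is a minimal sketch that mirrors the curl call above. The helper name build_agent_request is hypothetical, and the app URL and token are placeholders that you supply.

```python
import json
import urllib.request


def build_agent_request(app_url: str, token: str, message: str, stream: bool = True):
    """Build a POST request for the agent's /responses endpoint."""
    body = {"input": [{"role": "user", "content": message}], "stream": stream}
    return urllib.request.Request(
        url=f"{app_url.rstrip('/')}/responses",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",  # OAuth token, not a PAT
            "Content-Type": "application/json",
        },
        method="POST",
    )


# To send it (requires a deployed app and a valid OAuth token):
# with urllib.request.urlopen(build_agent_request(url, token, "hi")) as resp:
#     for line in resp:
#         print(line.decode("utf-8"), end="")
```

Separating request construction from sending keeps the sketch easy to inspect and lets you swap in another HTTP client if you prefer.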

Understand model signatures to ensure compatibility with Databricks features

Databricks uses MLflow Model Signatures to define agents' input and output schema. Product features like the AI Playground assume that your agent has one of a set of supported model signatures.

If you follow the recommended approach to authoring agents using the ResponsesAgent interface, MLflow will automatically infer a signature for your agent that is compatible with Databricks product features.

Limitations

- Only medium and large compute sizes are supported. See Configure compute resources for a Databricks app.

- The MLflow Review App Chat UI does not currently support agents deployed on Databricks Apps. To evaluate existing traces, use labeling sessions, which work regardless of deployment method. Databricks is building review and feedback support directly into the chatbot template.

Next steps

Once your agent works in development, take it to production. See Productionize your Databricks Apps agent for the recommended sequence: CI/CD, load testing, then Unity AI Gateway.