Managed SaaS connectors

Databricks Lakeflow Connect provides fully managed connectors for ingesting data from enterprise SaaS applications. Each connector handles source-specific authentication, incremental reads, schema evolution, and automated retries.

Supported connectors

- Confluence: Ingest pages, spaces, and comments from Atlassian Confluence.
- Microsoft Dynamics 365: Ingest CRM and ERP data from Microsoft Dynamics 365 using Azure Synapse Link.
- Google Ads: Ingest campaign performance and advertising metrics from Google Ads.
- Google Analytics: Ingest website traffic and user behavior data from Google Analytics 4.
- HubSpot: Ingest CRM, marketing, and sales activity data from HubSpot.
- Jira: Ingest project management and issue tracking data from Atlassian Jira.
- Meta Ads: Ingest advertising campaign data from Meta (Facebook and Instagram Ads).
- NetSuite: Ingest ERP and financial data from Oracle NetSuite.
- Salesforce: Ingest CRM records and customer data from Salesforce using incremental reads.
- ServiceNow: Ingest IT service management records and tickets from ServiceNow.
- SharePoint: Ingest files and list data from Microsoft SharePoint sites.
- TikTok Ads: Ingest advertising performance data from TikTok Ads.
- Workday HCM: Ingest HR, payroll, and workforce data from Workday Human Capital Management.
- Workday Reports: Ingest custom Workday reports into Delta tables.
- Zendesk Support: Ingest support tickets, users, and organization data from Zendesk.

To share data with SAP Business Data Cloud (BDC), use the SAP BDC connector for Databricks, which uses Delta Sharing rather than Lakeflow Connect. See "Share data between SAP Business Data Cloud (BDC) and Databricks".

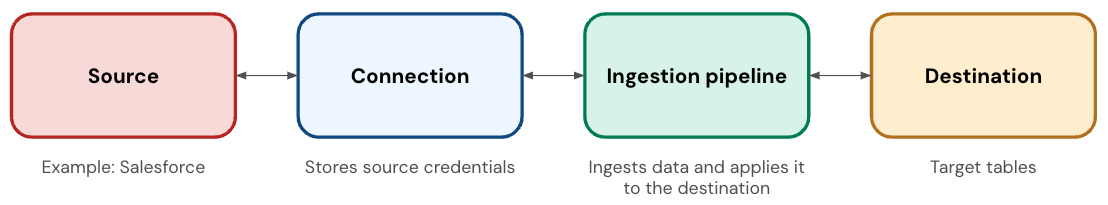

Connector components

A SaaS connector has the following components:

| Component | Description |
|---|---|
| Connection | A Unity Catalog securable object that stores authentication details for the application. |
| Ingestion pipeline | A pipeline that copies the data from the application into the destination tables. The ingestion pipeline runs on serverless compute. |
| Destination tables | The tables where the ingestion pipeline writes the data. These are streaming tables, which are Delta tables with extra support for incremental data processing. |
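
To make the relationship between the three components concrete, here is a minimal Python sketch. It is an illustrative model only, not the Databricks API: the class names `Connection` and `IngestionPipeline`, every field name, and the sample values (`my_salesforce_connection`, `main`, `crm_raw`) are assumptions made up for this example. It only shows that one pipeline references one connection and produces one destination table per ingested source object.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Connection:
    """Hypothetical stand-in for a Unity Catalog connection (holds auth details)."""
    name: str
    source_type: str  # e.g. "salesforce"; illustrative value only


@dataclass
class IngestionPipeline:
    """Hypothetical model of an ingestion pipeline bound to a single connection."""
    name: str
    connection: Connection
    destination_catalog: str
    destination_schema: str
    source_objects: list[str] = field(default_factory=list)
    # Per the table above, ingestion pipelines run on serverless compute.
    compute: str = "serverless"

    def destination_tables(self) -> list[str]:
        """Return one fully qualified table name per source object.

        The catalog.schema.table naming here is an assumption for illustration.
        """
        return [
            f"{self.destination_catalog}.{self.destination_schema}.{obj}"
            for obj in self.source_objects
        ]


# Sample values below are placeholders, not real workspace objects.
conn = Connection(name="my_salesforce_connection", source_type="salesforce")
pipeline = IngestionPipeline(
    name="salesforce_ingest",
    connection=conn,
    destination_catalog="main",
    destination_schema="crm_raw",
    source_objects=["Account", "Opportunity"],
)

for table in pipeline.destination_tables():
    print(table)
```

In this sketch the connection is kept separate from the pipeline so that several pipelines could reuse the same stored credentials, which mirrors the description of the connection as its own securable object.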