Tracing DeepSeek

MLflow Tracing provides automatic tracing for DeepSeek models through the OpenAI SDK integration. Because DeepSeek exposes an OpenAI-compatible API, you can use mlflow.openai.autolog() to trace interactions with DeepSeek models.

import mlflow

mlflow.openai.autolog()

MLflow Tracing automatically captures the following information about DeepSeek calls:

- Prompts and completion responses

- Latencies

- Model name

- Additional metadata such as temperature and max_tokens, if specified

- Function calling, if returned in the response

- Any exception if raised

On serverless compute clusters, autologging is not automatically enabled. You must explicitly call mlflow.openai.autolog() to enable automatic tracing for this integration.

Prerequisites

To use MLflow Tracing with DeepSeek (using its OpenAI-compatible API), you need to install MLflow and the OpenAI SDK.


For development environments, install the full MLflow package with Databricks extras and openai:

pip install --upgrade "mlflow[databricks]>=3.1" openai

The full mlflow[databricks] package includes all features for local development and experimentation on Databricks.

For production deployments, install mlflow-tracing and openai:

pip install --upgrade mlflow-tracing openai

The mlflow-tracing package is optimized for production use.

MLflow 3 is highly recommended for the best tracing experience.

Before running the examples, you'll need to configure your environment:

For users outside Databricks notebooks: Set your Databricks environment variables:

export DATABRICKS_HOST="https://your-workspace.cloud.databricks.com"

export DATABRICKS_TOKEN="your-personal-access-token"

For users inside Databricks notebooks: These credentials are automatically set for you.

API Keys: Ensure your DeepSeek API key is configured. For production use, use AI Gateway or Databricks secrets instead of hardcoded values:

export DEEPSEEK_API_KEY="your-deepseek-api-key"
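
A missing variable tends to surface later as an authentication error, so it can help to check the configuration before running the examples. The following is a minimal sketch and not part of MLflow: the helper name `missing_env_vars` is hypothetical, and it checks only the three variables named above.

```python
import os

# Variables named in the setup steps above.
REQUIRED_VARS = ("DATABRICKS_HOST", "DATABRICKS_TOKEN", "DEEPSEEK_API_KEY")


def missing_env_vars(env=None, required=REQUIRED_VARS):
    """Return the names of required variables that are unset or blank.

    Hypothetical helper for illustration; `env` defaults to os.environ.
    """
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name, "").strip()]


if __name__ == "__main__":
    missing = missing_env_vars()
    if missing:
        print("Missing environment variables: " + ", ".join(missing))
    else:
        print("All required environment variables are set.")
```

Inside a Databricks notebook, where the workspace credentials are set for you, passing `required=("DEEPSEEK_API_KEY",)` limits the check to the DeepSeek key.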

Supported APIs

MLflow supports automatic tracing for the following DeepSeek APIs through the OpenAI integration:

| Chat Completion | Function Calling | Streaming | Async |
|---|---|---|---|
| ✅ | ✅ | ✅ (*1) | ✅ (*2) |

(*1) Streaming support requires MLflow 2.15.0 or later.

(*2) Async support requires MLflow 2.21.0 or later.
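
The version requirements in the footnotes can be checked programmatically. The sketch below is an illustration under stated assumptions: the helper names are hypothetical, the parser reads only the leading numeric components (so a pre-release such as `3.1.0rc0` is treated as `3.1.0`), and the installed version is looked up under the distribution names `mlflow` and `mlflow-tracing`.

```python
import re
from importlib import metadata

# Minimum MLflow versions taken from the footnotes above.
MIN_VERSIONS = {"streaming": (2, 15, 0), "async": (2, 21, 0)}


def parse_version(version):
    """Parse the leading 'X.Y' or 'X.Y.Z' of a version string into a tuple of ints."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", version)
    if match is None:
        raise ValueError(f"Unrecognized version string: {version!r}")
    major, minor, patch = match.groups()
    return (int(major), int(minor), int(patch or 0))


def supports(feature, version):
    """Return True if `version` meets the minimum listed for `feature`."""
    return parse_version(version) >= MIN_VERSIONS[feature]


def installed_mlflow_version():
    """Return the installed version string, or None if neither package is found."""
    for dist in ("mlflow", "mlflow-tracing"):
        try:
            return metadata.version(dist)
        except metadata.PackageNotFoundError:
            continue
    return None
```

For example, `supports("async", "2.20.3")` evaluates to `False`, because 2.20.3 is older than the 2.21.0 minimum for async tracing.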

To request support for additional APIs, please open a feature request on GitHub.

Basic Example

import os

import mlflow
import openai

# Ensure your DEEPSEEK_API_KEY is set in your environment
# os.environ["DEEPSEEK_API_KEY"] = "your-deepseek-api-key"  # Uncomment and set if not globally configured

# Enable auto-tracing for OpenAI (works with DeepSeek)
mlflow.openai.autolog()

# Set up MLflow tracking to Databricks
mlflow.set_tracking_uri("databricks")
mlflow.set_experiment("/Shared/deepseek-demo")

# Initialize the OpenAI client with the DeepSeek API endpoint and your key
client = openai.OpenAI(
    base_url="https://api.deepseek.com",
    api_key=os.environ.get("DEEPSEEK_API_KEY"),  # Or directly pass your key string
)

messages = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "What is the capital of France?"},
]

response = client.chat.completions.create(
    model="deepseek-chat",
    messages=messages,
    temperature=0.1,
    max_tokens=100,
)

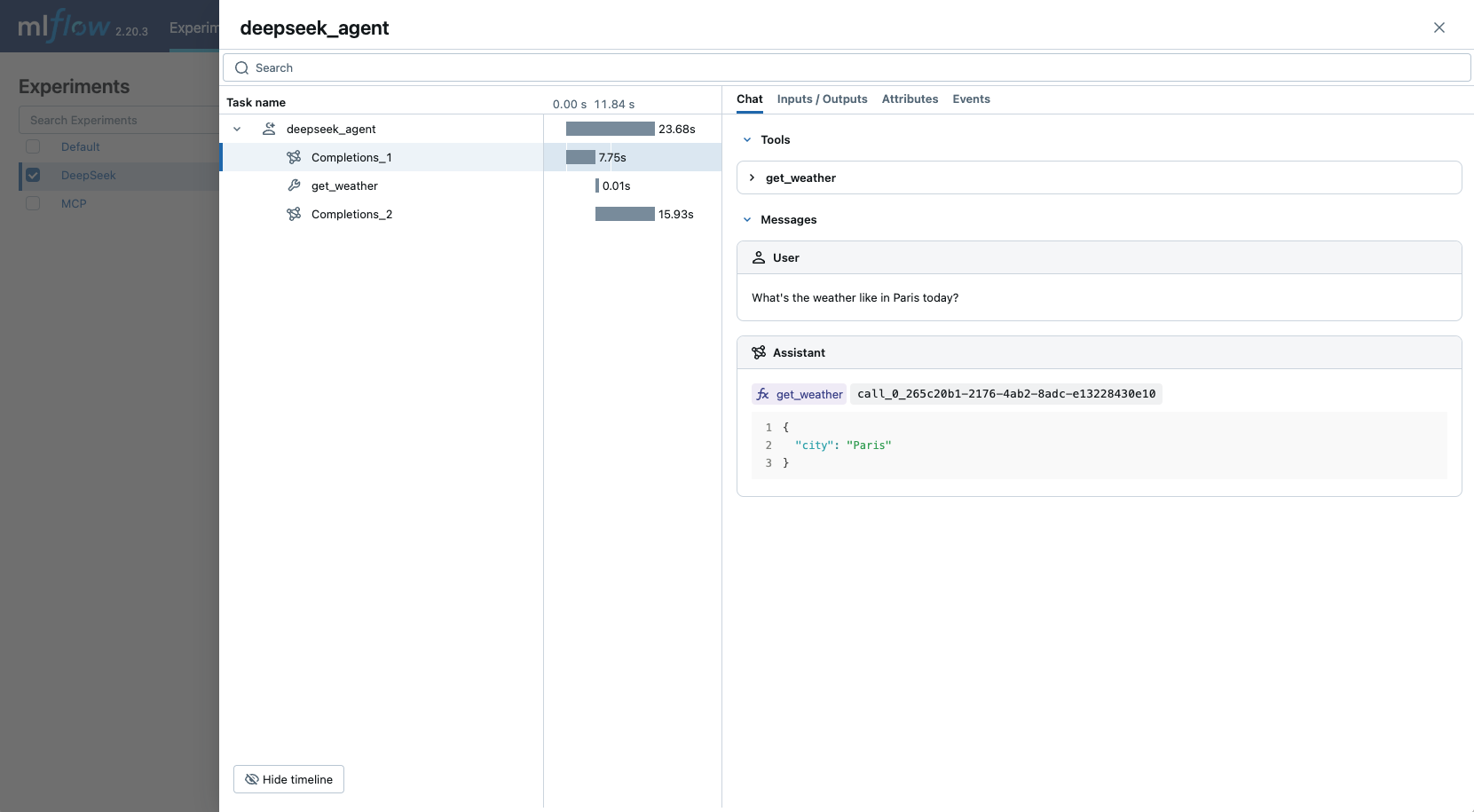

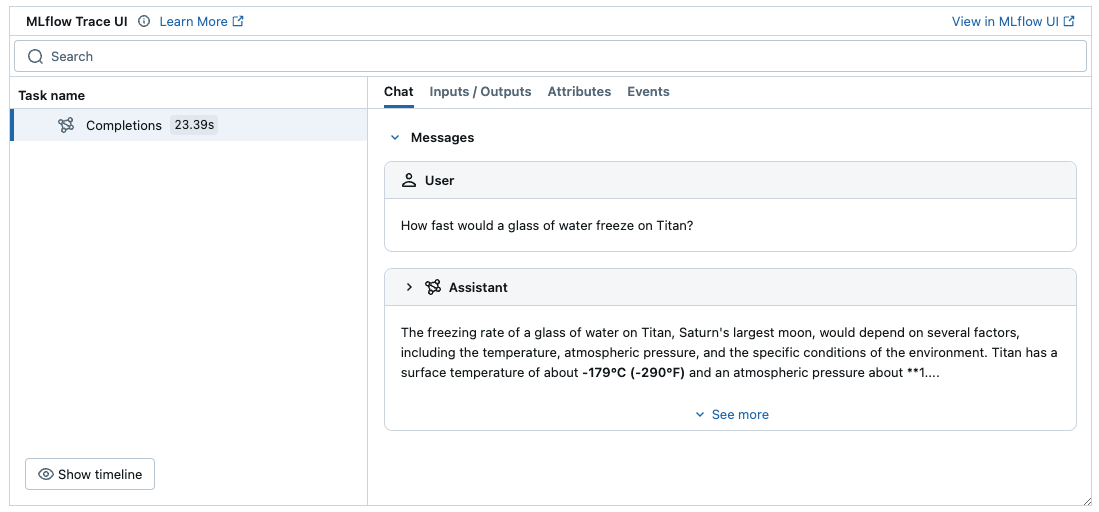

The above example should generate a trace in the experiment in the MLflow UI:

For production environments, use AI Gateway or Databricks secrets instead of hardcoded values for secure API key management.

Streaming and Async Support

MLflow supports tracing for streaming and async DeepSeek APIs. See the OpenAI Tracing documentation for example code that traces streaming and async calls through the OpenAI SDK.
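
As a rough illustration of what a streaming call looks like on the client side, the sketch below assembles the text deltas of a streamed chat completion. The helper names (`collect_stream_text`, `stream_answer`, `fake_chunk`) are hypothetical, `client` is assumed to be an `openai.OpenAI` instance configured as in the basic example, and tracing still requires calling mlflow.openai.autolog() beforehand.

```python
from types import SimpleNamespace


def collect_stream_text(chunks):
    """Join the text deltas from an iterable of chat completion chunks."""
    parts = []
    for chunk in chunks:
        # Some chunks (for example a trailing usage chunk) carry no choices.
        if not chunk.choices:
            continue
        delta = chunk.choices[0].delta.content
        if delta:
            parts.append(delta)
    return "".join(parts)


def stream_answer(client, question, model="deepseek-chat"):
    """Send one question with stream=True and return the assembled reply."""
    stream = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": question}],
        stream=True,
    )
    return collect_stream_text(stream)


def fake_chunk(text):
    """Build a stand-in shaped like a streamed chunk, for offline experiments."""
    delta = SimpleNamespace(content=text)
    return SimpleNamespace(choices=[SimpleNamespace(delta=delta)])
```

Keeping the chunk handling in its own function means it can be exercised offline with `fake_chunk` objects, without an API key or network access.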

Advanced Example: Function Calling Agent

MLflow Tracing automatically captures function calling responses from DeepSeek models through the OpenAI SDK. The function call returned in the response is highlighted in the trace UI. You can also annotate the tool function with the @mlflow.trace decorator to create a span for the tool execution.

The following example implements a simple function calling agent using DeepSeek Function Calling and MLflow Tracing.

import json
import os

import mlflow
from mlflow.entities import SpanType
from openai import OpenAI

# Ensure your DEEPSEEK_API_KEY is set in your environment
# os.environ["DEEPSEEK_API_KEY"] = "your-deepseek-api-key"  # Uncomment and set if not globally configured

# Initialize the OpenAI client with the DeepSeek API endpoint and your key
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key=os.environ.get("DEEPSEEK_API_KEY"),  # Or directly pass your key string
)

# Set up MLflow tracking to Databricks if not already configured
# mlflow.set_tracking_uri("databricks")
# mlflow.set_experiment("/Shared/deepseek-agent-demo")

# Assuming autolog is enabled globally or called earlier
# mlflow.openai.autolog()


# Define the tool function. Decorate it with `@mlflow.trace` to create a span for its execution.
@mlflow.trace(span_type=SpanType.TOOL)
def get_weather(city: str) -> str:
    if city == "Tokyo":
        return "sunny"
    elif city == "Paris":
        return "rainy"
    return "unknown"


tools = [
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "parameters": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
            },
        },
    }
]

_tool_functions = {"get_weather": get_weather}


# Define a simple tool calling agent
@mlflow.trace(span_type=SpanType.AGENT)
def run_tool_agent(question: str):
    messages = [{"role": "user", "content": question}]

    # Invoke the model with the given question and available tools
    response = client.chat.completions.create(
        model="deepseek-chat",
        messages=messages,
        tools=tools,
    )
    ai_msg = response.choices[0].message
    messages.append(ai_msg)

    # If the model requests tool call(s), invoke the function with the specified arguments
    if tool_calls := ai_msg.tool_calls:
        for tool_call in tool_calls:
            function_name = tool_call.function.name
            if tool_func := _tool_functions.get(function_name):
                args = json.loads(tool_call.function.arguments)
                tool_result = tool_func(**args)
            else:
                raise RuntimeError("An invalid tool is returned from the assistant!")

            messages.append(
                {
                    "role": "tool",
                    "tool_call_id": tool_call.id,
                    "content": tool_result,
                }
            )

        # Send the tool results to the model and get a new response
        response = client.chat.completions.create(
            model="deepseek-chat", messages=messages
        )

    return response.choices[0].message.content


# Run the tool calling agent
question = "What's the weather like in Paris today?"
answer = run_tool_agent(question)
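
The agent above raises a RuntimeError when the model names an unknown tool, and it does not guard against malformed arguments. One possible alternative, sketched below, returns the problem as the tool result so the model can react to it. This is an assumption-laden illustration rather than part of MLflow or the OpenAI SDK: `dispatch_tool_call` is a hypothetical helper, and the demonstration uses a stand-in object in place of the SDK's tool call.

```python
import json
from types import SimpleNamespace


def dispatch_tool_call(tool_call, tool_functions):
    """Run one tool call and always return a string for the "tool" message."""
    name = tool_call.function.name
    tool_func = tool_functions.get(name)
    if tool_func is None:
        return f"Error: unknown tool '{name}'"
    try:
        args = json.loads(tool_call.function.arguments or "{}")
    except json.JSONDecodeError as exc:
        return f"Error: arguments are not valid JSON ({exc.msg})"
    if not isinstance(args, dict):
        return "Error: arguments must be a JSON object"
    try:
        return str(tool_func(**args))
    except TypeError as exc:
        # Typically raised when the model omits or misnames a parameter.
        return f"Error: bad arguments for '{name}': {exc}"


# Offline demonstration with a stand-in for the SDK's tool call object.
def get_weather(city: str) -> str:
    return {"Tokyo": "sunny", "Paris": "rainy"}.get(city, "unknown")


call = SimpleNamespace(
    function=SimpleNamespace(name="get_weather", arguments='{"city": "Paris"}')
)
print(dispatch_tool_call(call, {"get_weather": get_weather}))  # prints "rainy"
```

Whether to raise or to report the error back to the model is a design choice: raising makes failures visible in the trace as exceptions, while returning an error string keeps the conversation going.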

Disable auto-tracing

Auto-tracing for DeepSeek (through the OpenAI SDK) can be disabled globally by calling mlflow.openai.autolog(disable=True) or mlflow.autolog(disable=True).
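
If tracing needs to be switched off per environment without code changes, the `disable` flag can be driven by configuration. The sketch below is only one way to do this: the environment variable `DEEPSEEK_TRACING_ENABLED` and both helper names are hypothetical, and tracing stays enabled unless the variable is set to a false-like value.

```python
import os

_FALSE_VALUES = {"0", "false", "no", "off"}


def tracing_enabled(env=None, var="DEEPSEEK_TRACING_ENABLED"):
    """Return False only when the (hypothetical) variable holds a false-like value."""
    env = os.environ if env is None else env
    return env.get(var, "true").strip().lower() not in _FALSE_VALUES


def configure_tracing(env=None):
    """Enable or disable OpenAI autologging according to the environment."""
    import mlflow  # imported lazily so tracing_enabled() works without MLflow

    enabled = tracing_enabled(env)
    mlflow.openai.autolog(disable=not enabled)
    return enabled
```

Calling `configure_tracing()` once at startup, before any client calls, applies the setting for the rest of the process.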