Guiding principles for the lakehouse

Guiding principles are level-zero rules that define and influence your architecture. To build a data lakehouse that helps your business succeed now and in the future, consensus among stakeholders in your organization is critical.

Curate data and offer trusted data-as-products

Curating data is essential to creating a high-value data lake for BI and ML/AI. Treat data like a product with a clear definition, schema, and lifecycle. Ensure semantic consistency, and make sure that data quality improves from layer to layer, so that business users can fully trust the data.

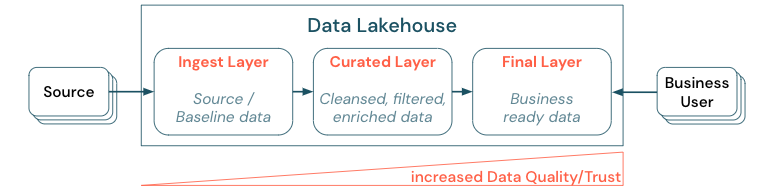

Curating data by establishing a layered (or multi-hop) architecture is a critical best practice for the lakehouse, as it allows data teams to structure the data according to quality levels and define roles and responsibilities per layer. A common layering approach is:

- Ingest layer: Source data is ingested into the first layer of the lakehouse and should be persisted there. When all downstream data is created from the ingest layer, the subsequent layers can be rebuilt from it if needed.

- Curated layer: The purpose of the second layer is to hold cleansed, refined, filtered and aggregated data. The goal of this layer is to provide a sound, reliable foundation for analyses and reports across all roles and functions.

- Final layer: The third layer is built around business or project needs. It provides data products to other business units or projects, each offering a different view of the data, for example by preparing data around security needs (such as anonymized data) or by optimizing for performance (such as pre-aggregated views). The data products in this layer are seen as the truth for the business.

Pipelines across all layers need to ensure that data quality constraints are met, meaning that data is accurate, complete, accessible, and consistent at all times, even during concurrent reads and writes. The validation of new data happens at the time of data entry into the curated layer, and the following ETL steps work to improve the quality of this data. Data quality must improve as data progresses through the layers and, as such, the trust in the data subsequently increases from a business point of view.
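The layering described above can be sketched in a few lines of Python. This is a minimal illustration, not a platform API: the `Order` type, the `is_valid` rules, and the sample rows are all hypothetical. It shows the ingest layer persisting source rows unchanged, validation happening on entry into the curated layer, and a final-layer data product built only from curated data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    """Hypothetical typed record used in the curated layer."""
    order_id: str
    customer: str
    amount: float


def ingest(raw_rows):
    """Ingest layer: persist source rows unchanged so later layers can be rebuilt."""
    return list(raw_rows)


def is_valid(row):
    """Hypothetical quality constraints checked on entry into the curated layer."""
    return (
        bool(row.get("order_id"))
        and bool(row.get("customer"))
        and isinstance(row.get("amount"), (int, float))
        and row["amount"] >= 0
    )


def curate(ingested_rows):
    """Curated layer: keep valid rows only, typed and de-duplicated by order_id."""
    curated = {}
    rejected = []
    for row in ingested_rows:
        if is_valid(row):
            curated[row["order_id"]] = Order(
                row["order_id"], row["customer"], float(row["amount"])
            )
        else:
            rejected.append(row)  # kept aside for inspection, never silently dropped
    return list(curated.values()), rejected


def revenue_per_customer(curated_orders):
    """Final layer: a pre-aggregated data product built only from curated data."""
    totals = {}
    for order in curated_orders:
        totals[order.customer] = totals.get(order.customer, 0.0) + order.amount
    return totals


raw = [
    {"order_id": "o1", "customer": "acme", "amount": 10.0},
    {"order_id": "o1", "customer": "acme", "amount": 10.0},  # duplicate delivery
    {"order_id": "o2", "customer": "acme", "amount": 5},
    {"order_id": "o3", "customer": "", "amount": 7.5},       # missing customer
    {"order_id": "o4", "customer": "globex", "amount": -1},  # negative amount
]

ingested = ingest(raw)
curated, rejected = curate(ingested)
product = revenue_per_customer(curated)
```

Because `ingested` still holds every source row, the curated and final layers can be recomputed at any time, for example after the validation rules change.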

Eliminate data silos and minimize data movement

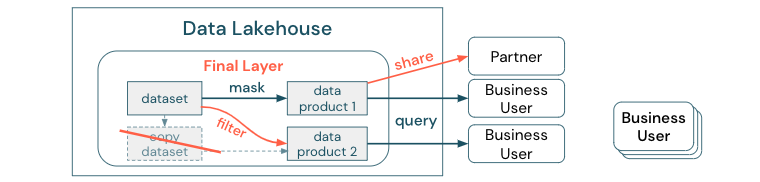

Don't create copies of a dataset with business processes relying on these different copies. Copies may become data silos that get out of sync, leading to lower quality of your data lake, and finally to outdated or incorrect insights. Also, for sharing data with external partners, use an enterprise sharing mechanism that allows direct access to the data in a secure way.

To make the distinction between a data copy and a data silo clear: A standalone or throwaway copy of data is not harmful on its own. It is sometimes necessary for boosting agility, experimentation, and innovation. However, if these copies become operational with downstream business data products dependent on them, they become data silos.

To prevent data silos, data teams usually attempt to build a mechanism or data pipeline to keep all copies in sync with the original. Since this is unlikely to happen consistently, data quality eventually degrades. This can also lead to higher costs and a significant loss of user trust. On the other hand, several business use cases require data sharing with partners or suppliers.

An important aspect is to securely and reliably share the latest version of the dataset. Copies of the dataset are often not sufficient, because they can get out of sync quickly. Instead, data should be shared via enterprise data-sharing tools.
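The difference between handing out a copy and sharing direct access can be illustrated with a small Python analogy. It only sketches the idea and does not model any real data-sharing product: the `source` dictionary stands in for a dataset, `dict(source)` for an exported copy, and `types.MappingProxyType` for a read-only view of the original.

```python
from types import MappingProxyType

# Hypothetical dataset owned by the producing team.
source = {"sku-1": 10, "sku-2": 4}

# Option 1: hand the consumer an independent copy.
snapshot_copy = dict(source)

# Option 2: give the consumer a read-only, live view of the original.
shared_view = MappingProxyType(source)

# The producer updates the dataset after sharing it.
source["sku-1"] = 7

stale_value = snapshot_copy["sku-1"]   # still the value at copy time
current_value = shared_view["sku-1"]   # reflects the producer's update
```

The copy keeps the old value until someone re-synchronizes it, while the view always reflects the latest state and cannot be modified by the consumer.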

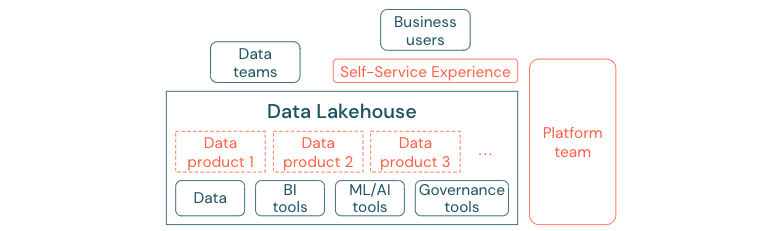

Democratize value creation through self-service

The best data lake cannot provide sufficient value if users cannot easily access the platform or data for their BI and ML/AI tasks. Lower the barriers to accessing data and platforms for all business units. Consider lean data management processes and provide self-service access for the platform and the underlying data.

Businesses that have successfully moved to a data-driven culture will thrive. This means every business unit derives its decisions from analytical models or from analyzing its own or centrally provided data. For consumers, data has to be easily discoverable and securely accessible.

A good concept for data producers is “data as a product”: The data is offered and maintained by one business unit or business partner like a product and consumed by other parties with proper permission control. Instead of relying on a central team and potentially slow request processes, these data products must be created, offered, discovered, and consumed in a self-service experience.

However, it's not just the data that matters. The democratization of data requires the right tools to enable everyone to produce or consume and understand the data. For this, you need the data lakehouse to be a modern data and AI platform that provides the infrastructure and tooling for building data products without duplicating the effort of setting up another tool stack.

Adopt an organization-wide data and AI governance strategy

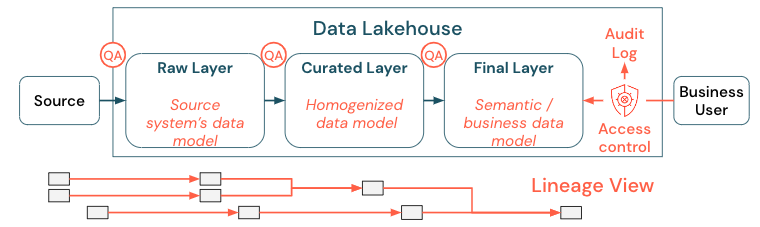

Data is a critical asset of any organization, but you cannot give everyone access to all data. Data access must be actively managed. Access control, auditing, and lineage-tracking are key for the correct and secure use of data.

Data governance is a broad topic. The lakehouse covers the following dimensions:

- Data quality

  The most important prerequisite for correct and meaningful reports, analysis results, and models is high-quality data. Quality assurance (QA) needs to exist around all pipeline steps. Examples of how to implement this include having data contracts, meeting SLAs, keeping schemas stable, and evolving them in a controlled way.

- Data catalog

  Another important aspect is data discovery: Users of all business areas, especially in a self-service model, must be able to discover relevant data easily. Therefore, a lakehouse needs a data catalog that covers all business-relevant data. The primary goals of a data catalog are as follows:

  - Ensure the same business concept is named and defined uniformly across the business. You might think of it as a semantic model in the curated and the final layer.

  - Track the data lineage precisely so that users can explain how the data arrived at its current shape and form.

  - Maintain high-quality metadata, which is as important as the data itself for proper use of the data.

- Access control

  As the value creation from the data in the lakehouse happens across all business areas, the lakehouse must be built with security as a first-class citizen. Companies might have a more open data access policy or strictly follow the principle of least privilege. Independent of that, data access controls must be in place in every layer. It is important to implement fine-grained permission schemes from the very beginning (column- and row-level access control, role-based or attribute-based access control). Companies can start with less strict rules. But as the lakehouse platform grows, all mechanisms and processes for a more sophisticated security regime should already be in place. Additionally, all access to the data in the lakehouse must be recorded in audit logs from the get-go.
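To make the access control dimension concrete, here is a minimal Python sketch of role-based access with column- and row-level filtering plus an audit log. The `Policy` and `GovernedTable` classes, the role names, and the sample rows are all hypothetical; a real lakehouse would enforce this in the platform's governance layer rather than in application code.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class Policy:
    """Hypothetical per-role policy: visible columns plus a row filter."""
    columns: frozenset
    row_filter: Callable[[dict], bool]


@dataclass
class GovernedTable:
    rows: list
    policies: dict  # role name -> Policy
    audit_log: list = field(default_factory=list)

    def read(self, user, role):
        policy = self.policies.get(role)
        allowed = policy is not None
        # Every access attempt is logged, including denied ones.
        self.audit_log.append({
            "user": user,
            "role": role,
            "allowed": allowed,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        if not allowed:
            raise PermissionError(f"role {role!r} has no access to this table")
        # Row-level filter first, then column-level projection.
        return [
            {col: value for col, value in row.items() if col in policy.columns}
            for row in self.rows
            if policy.row_filter(row)
        ]


table = GovernedTable(
    rows=[
        {"customer": "acme", "region": "EU", "email": "a@example.com", "revenue": 120},
        {"customer": "globex", "region": "US", "email": "g@example.com", "revenue": 80},
    ],
    policies={
        # EU analysts see EU rows only, and never the email column.
        "eu_analyst": Policy(
            columns=frozenset({"customer", "region", "revenue"}),
            row_filter=lambda row: row["region"] == "EU",
        ),
    },
)

eu_view = table.read("dana", "eu_analyst")
```

Roles without a policy are denied by default, which corresponds to the stricter end of the spectrum described above. A more open policy would add broader `Policy` entries rather than bypass the check or the audit log.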

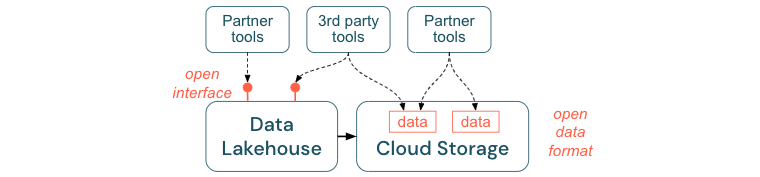

Encourage open interfaces and open formats

Open interfaces and data formats are crucial for interoperability between the lakehouse and other tools. They simplify integration with existing systems and also open up an ecosystem of partners who have integrated their tools with the platform.

Open interfaces are critical to enabling interoperability and preventing dependency on any single vendor. Traditionally, vendors built proprietary technologies and closed interfaces that limited how enterprises could store, process, and share data.

Building upon open interfaces helps you build for the future:

- It increases the longevity and portability of the data so that you can use it with more applications and for more use cases.

- It opens an ecosystem of partners who can quickly leverage the open interfaces to integrate their tools into the lakehouse platform.

Finally, standardizing on open formats for data can significantly lower total costs: you can access the data directly on the cloud storage without the need to pipe it through a proprietary platform that can incur high egress and computation costs.

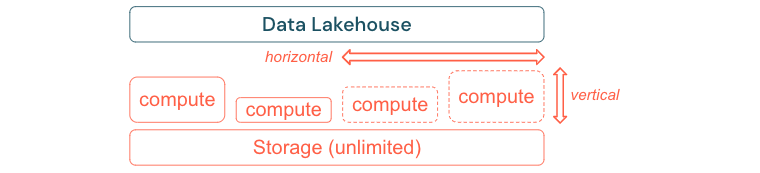

Build to scale and optimize for performance and cost

Data inevitably continues to grow and become more complex. To equip your organization for future needs, your lakehouse should be able to scale. For example, you should be able to add new resources easily on demand. Costs should be limited to the actual consumption.

Standard ETL processes, business reports, and dashboards often have a predictable resource need from a memory and computation perspective. However, new projects, seasonal tasks, or modern approaches like model training (churn, forecast, maintenance) generate peaks of resource need. To enable a business to perform all these workloads, a scalable platform for memory and computation is necessary. New resources must be added easily on demand, and only the actual consumption should generate costs. As soon as the peak is over, resources can be freed up again and costs reduced accordingly. Adding or removing nodes is often referred to as horizontal scaling, and switching to larger or smaller nodes as vertical scaling.

Scaling also enables businesses to improve the performance of queries by selecting nodes with more resources or clusters with more nodes. But instead of permanently providing large machines and clusters, they can be provisioned on demand only for the time needed, to optimize the overall performance-to-cost ratio. Another aspect of optimization is storage versus compute resources. Since there is no clear relation between the volume of the data and workloads using this data (for example, only using parts of the data or doing intensive calculations on small data), it is a good practice to settle on an infrastructure platform that decouples storage and compute resources.
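The cost argument for on-demand provisioning can be worked through with a small calculation. The sketch below is illustrative only: the load profile, node capacity, and hourly price are made-up numbers, and the helper functions are hypothetical. It compares a cluster sized permanently for the peak with one that is resized every hour (horizontal scaling).

```python
import math


def nodes_needed(load_units, capacity_per_node):
    """Smallest number of nodes that can serve the given load (at least one)."""
    return max(1, math.ceil(load_units / capacity_per_node))


def cost_fixed(hourly_load, capacity_per_node, price_per_node_hour):
    """Cluster sized permanently for the peak hour."""
    peak_nodes = nodes_needed(max(hourly_load), capacity_per_node)
    return peak_nodes * price_per_node_hour * len(hourly_load)


def cost_on_demand(hourly_load, capacity_per_node, price_per_node_hour):
    """Cluster resized every hour to match the load."""
    return sum(
        nodes_needed(load, capacity_per_node) * price_per_node_hour
        for load in hourly_load
    )


# Assumed daily profile: 20 quiet hours plus a 4-hour peak (for example, model training).
hourly_load = [20] * 20 + [200] * 4

fixed = cost_fixed(hourly_load, capacity_per_node=25, price_per_node_hour=2.0)
elastic = cost_on_demand(hourly_load, capacity_per_node=25, price_per_node_hour=2.0)
savings = fixed - elastic
```

With these assumed numbers, the peak-sized cluster runs 8 nodes for all 24 hours, while the elastic cluster runs 1 node for 20 hours and 8 nodes for 4 hours. The gap between the two depends entirely on how short and how high the peaks are.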