Unity AI Gateway for agents and LLMs

This page covers the new AI Gateway (visible in the left navigation of the UI), which is currently in Beta. Account admins can enable access to this feature on the Previews page of the account console. See Manage Databricks previews.

For details on the previous version of Unity AI Gateway, see Unity AI Gateway for serving endpoints.

What is Unity AI Gateway?

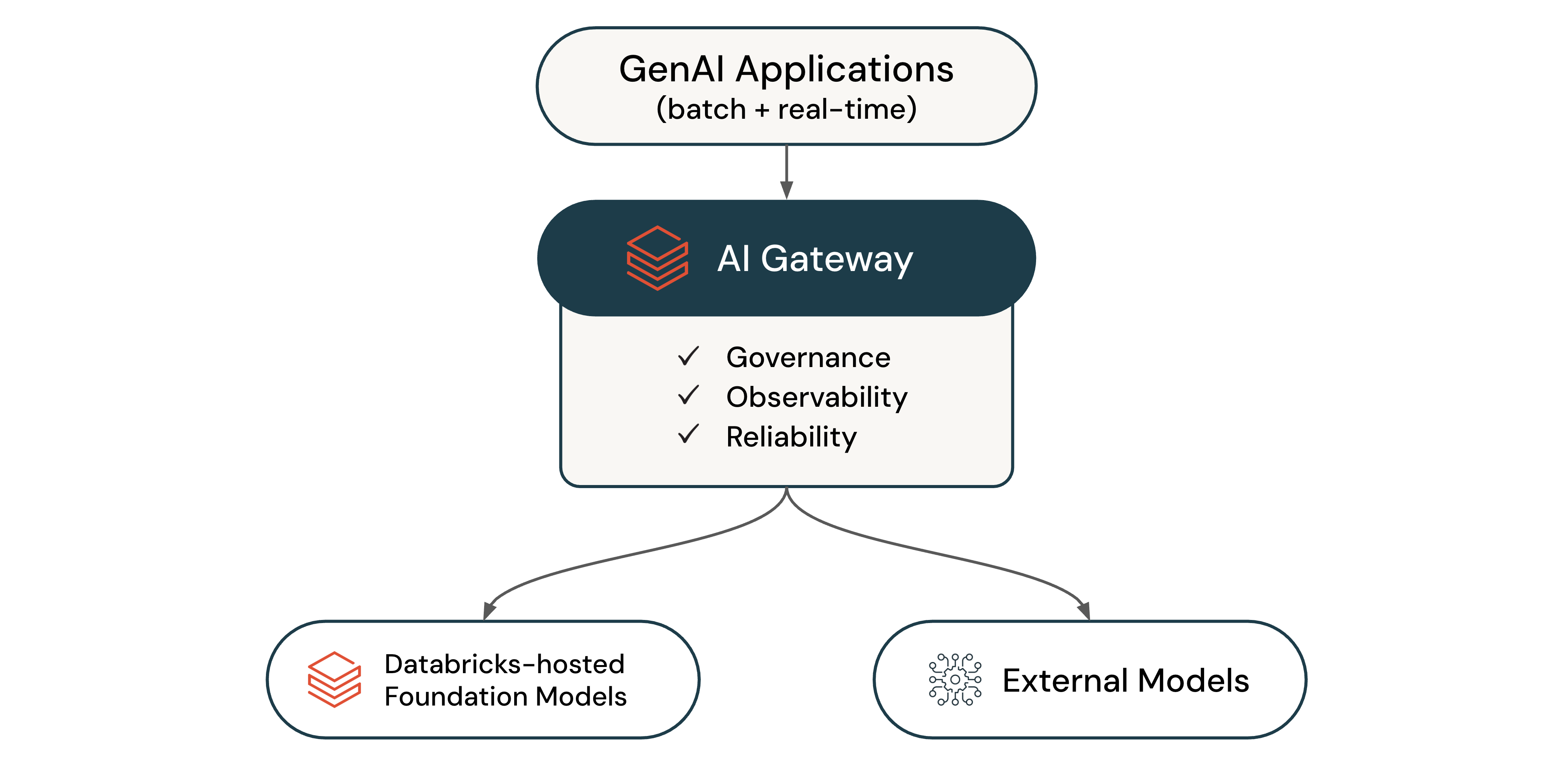

Unity AI Gateway is the enterprise control plane for governing LLM endpoints, agents, and coding tools. Use it to analyze usage, configure permissions, and manage capacity across providers.

With Unity AI Gateway, you can:

- Analyze how LLMs, agents, and coding tools are used in your organization

- Govern access to Databricks-hosted and external models

- Log LLM traffic across all endpoints to Unity Catalog

- Monitor endpoint health and provider availability

- Enforce rate limits and guardrails at the endpoint, user, or group level

- Attribute costs to specific endpoints, users, and teams

- Route traffic intelligently across providers for reliability and load balancing

- Split traffic across multiple model backends for scalability

- Switch providers and models without code changes

Supported features

The following table describes the available Unity AI Gateway features:

| Feature | Description |
|---|---|
| Permissions | Control who has access to your endpoints. |
| | Monitor usage and costs using system tables. |
| | Monitor and audit requests and responses in Unity Catalog Delta tables. |
| Operational metrics | Monitor usage in real time. |
| | Enforce consumption limits at the endpoint, user, or group level. |
| | Apply content filtering, sensitive data protection, and custom policies. |
| | Analyze Databricks cost for Unity AI Gateway endpoints, destination models, principals, and tags in the billable usage system table and the usage dashboard. |
| Fallbacks | Increase reliability by routing to multiple providers when failures occur. |
| Traffic splitting | Distribute traffic across multiple model backends for better scalability and load balancing. |
| Custom APIs | Govern custom and external APIs with the same access controls, rate limits, and logging as LLM endpoints. |

Unity AI Gateway features don't incur charges during Beta.
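To make the fallback and traffic splitting rows more concrete, the following is a minimal conceptual sketch in plain Python. It is not the Unity AI Gateway implementation or its configuration API: the `route` and `pick_backend` functions, the backend names, and the weights are all hypothetical and exist only to illustrate the idea of picking a backend by weight and moving to the next one when a call fails.

```python
import random
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical backend type: takes a prompt and returns a response string.
Backend = Callable[[str], str]


def pick_backend(weights: Dict[str, float], rng: random.Random) -> str:
    """Choose a backend name with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


def route(
    prompt: str,
    backends: Dict[str, Backend],
    weights: Dict[str, float],
    fallback_order: List[str],
    rng: Optional[random.Random] = None,
) -> Tuple[str, str]:
    """Send the prompt to a weighted-random backend, falling back on failure.

    Returns the name of the backend that answered and its response.
    """
    rng = rng or random.Random()
    primary = pick_backend(weights, rng)
    # Try the primary first, then the remaining backends in fallback order.
    candidates = [primary] + [n for n in fallback_order if n != primary]
    last_error: Optional[Exception] = None
    for name in candidates:
        try:
            return name, backends[name](prompt)
        except Exception as exc:  # illustration only: treat any error as a failure
            last_error = exc
    raise RuntimeError("all backends failed") from last_error
```

In this sketch, a call such as `route("Hello", backends, {"a": 0.8, "b": 0.2}, ["a", "b"])` would send roughly 80% of requests to the hypothetical backend `a` and retry on `b` whenever `a` raises. With the gateway, this kind of logic lives in the endpoint configuration rather than in application code.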

Use Unity AI Gateway

Databricks provides Unity AI Gateway endpoints for popular LLMs. You can create new endpoints to govern agents, coding tools, and other applications.

To get started, see Configure Unity AI Gateway endpoints. To query endpoints, see Query Unity AI Gateway endpoints. To integrate coding agents like Cursor, Gemini CLI, Codex CLI, and Claude Code, see Integrate with coding agents. To route LLM calls from agents you author and deploy on Databricks Apps through Unity AI Gateway, see Step 4. Govern LLM usage from your agents on Databricks Apps with Unity AI Gateway.

Query quickstart

Tell Genie Code (Agent mode) to do this for you:

Create a new notebook that queries a Unity AI Gateway endpoint using Python and the OpenAI client.

The following example shows how to query a Unity AI Gateway endpoint using Python and the OpenAI client:

```python
import os

from openai import OpenAI

# To get a Databricks token, see https://docs.databricks.com/dev-tools/auth/pat
DATABRICKS_TOKEN = os.environ.get("DATABRICKS_TOKEN")

# Point the OpenAI client at the Unity AI Gateway base URL for your workspace.
client = OpenAI(
    api_key=DATABRICKS_TOKEN,
    base_url="https://<workspace-url>/ai-gateway/mlflow/v1",
)

chat_completion = client.chat.completions.create(
    messages=[
        {"role": "user", "content": "Hello!"},
        {"role": "assistant", "content": "Hello! How can I assist you today?"},
        {"role": "user", "content": "What is Databricks?"},
    ],
    model="databricks-gpt-5-2",
    max_tokens=256,
)

print(chat_completion.choices[0].message.content)
```

Replace <workspace-url> with your Databricks workspace URL.
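Because the quickstart depends on two values you supply (the workspace URL and a token), it can help to validate both before creating the client. The following is a small sketch under stated assumptions: `gateway_base_url` and `require_token` are hypothetical helper names, `example.cloud.databricks.com` is a placeholder host, and the `/ai-gateway/mlflow/v1` path is copied from the quickstart rather than from any additional API reference.

```python
import os
from typing import Mapping
from urllib.parse import urlparse

# Path segment taken from the quickstart's base_url.
GATEWAY_PATH = "/ai-gateway/mlflow/v1"


def gateway_base_url(workspace_url: str) -> str:
    """Build the gateway base URL from a workspace URL or bare hostname.

    Any path or trailing slash on the input is dropped, and the scheme is
    always set to https (an assumption of this sketch).
    """
    url = workspace_url.strip()
    if not url:
        raise ValueError("workspace URL is empty")
    if "://" not in url:
        url = "https://" + url
    parsed = urlparse(url)
    if not parsed.netloc:
        raise ValueError(f"could not find a hostname in {workspace_url!r}")
    return f"https://{parsed.netloc}{GATEWAY_PATH}"


def require_token(env: Mapping[str, str] = os.environ) -> str:
    """Return DATABRICKS_TOKEN, failing early with a clear message if unset."""
    token = env.get("DATABRICKS_TOKEN")
    if not token:
        raise RuntimeError("Set DATABRICKS_TOKEN before creating the client")
    return token
```

You could then pass `api_key=require_token()` and `base_url=gateway_base_url("example.cloud.databricks.com")` to `OpenAI(...)` in place of the literal values, so that a missing token surfaces as an explicit error instead of a failed request.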