June 2019

These features and Databricks platform improvements were released in June 2019.

Releases are staged. Your Databricks account may not be updated for up to a week after the initial release date.

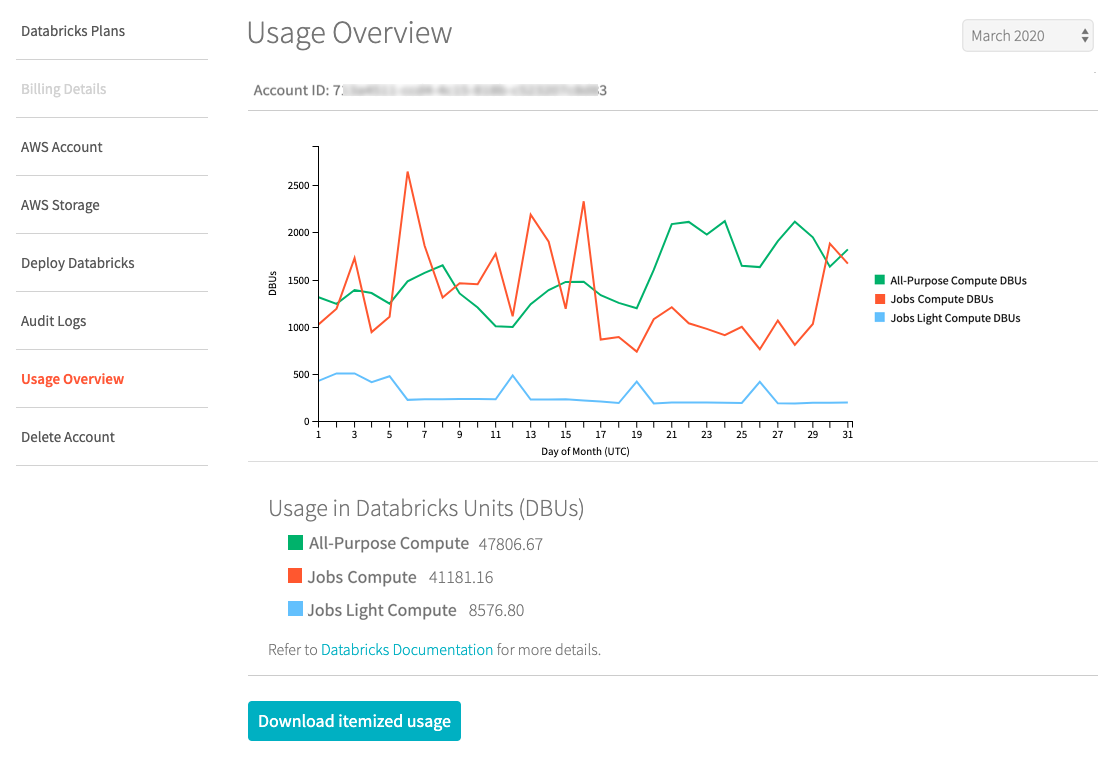

Account usage chart updated to display usage grouped by workload type

June 26, 2019

The usage graph has returned to the account console after a hiatus, with improvements. The new usage graph displays the exact number of DBUs consumed, grouped by workload type (product SKU): data analytics, data engineering, and data engineering light. The usage graph has been backfilled with prior month data.

In addition, starting 1 July, the usage CSV report downloadable from the Download itemized usage button will use the column name dbus instead of quantity.
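If you process the downloaded usage CSV with a script, the column rename can break it. The following is a minimal sketch of a reader that accepts either column name. Only the names dbus and quantity come from this release note; the sku column and the sample rows are hypothetical, and a real report contains more columns.

```python
import csv
import io


def total_dbus(csv_text):
    """Sum DBUs from a usage CSV, accepting the new or the old column name."""
    reader = csv.DictReader(io.StringIO(csv_text))
    fields = reader.fieldnames or []
    # Prefer the new column name; fall back to the name used before the rename.
    if "dbus" in fields:
        column = "dbus"
    elif "quantity" in fields:
        column = "quantity"
    else:
        raise ValueError("expected a 'dbus' or 'quantity' column")
    return sum(float(row[column]) for row in reader)


# Hypothetical sample data: "sku" is an assumed column name for illustration.
old_report = "sku,quantity\nA,1.5\nB,2.5\n"
new_report = "sku,dbus\nA,1.5\nB,2.5\n"

print(total_dbus(old_report))
print(total_dbus(new_report))
```

Checking for both names keeps the same script usable on reports downloaded before and after the change.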

See View billable usage (legacy).

RStudio integration no longer limited to high concurrency clusters

June 6 - 11, 2019: Version 2.99

Now you can enable RStudio Server on standard clusters in Databricks, in addition to the high-concurrency clusters that were already supported. Regardless of cluster mode, RStudio Server integration continues to require that you disable the automatic termination option for your cluster. See RStudio on Databricks.

MLflow 1.0

June 3, 2019

MLflow is an open source platform to manage the complete machine learning lifecycle. With MLflow, data scientists can track and share experiments locally or in the cloud, package and share models across frameworks, and deploy models virtually anywhere.

We are excited to announce the release of MLflow 1.0 today. The 1.0 release not only marks the maturity and stability of the APIs, but also adds a number of frequently requested features and improvements:

- The CLI was reorganized and now has dedicated commands for artifacts, models, db (the tracking database), and server (the tracking server).

- Tracking server search supports a simplified version of the SQL WHERE clause. In addition to supporting run metrics and params, search has been enhanced to support some run attributes and user and system tags.

- Adds support for x coordinates in the Tracking API. The MLflow UI visualization components now also support plotting metrics against provided x-coordinate values.

- Adds a runs/log-batch REST API endpoint as well as Python, R, and Java methods for logging multiple metrics, parameters, and tags with a single API request.

- For tracking, the MLflow 1.0 client is now supported on Windows.

- Adds support for HDFS as an artifact store backend.

- Adds a command to build a Docker container whose default entry point serves the specified MLflow Python function model at port 8080 within the container.

- Adds an experimental ONNX model flavor.

You can view the full list of changes in the MLflow Change log.
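To illustrate the runs/log-batch endpoint and the x-coordinate support listed above, here is a rough sketch that builds the JSON body for a single batch request. It only constructs the body and does not send it. The field names (run_id, metrics, params, tags, key, value, timestamp, step) reflect our understanding of the MLflow REST API and should be checked against the MLflow documentation for your version. The helper name build_log_batch_body and the run ID are hypothetical.

```python
import json
import time


def build_log_batch_body(run_id, metrics_by_step, params=None, tags=None):
    """Build a JSON body for one runs/log-batch request.

    metrics_by_step maps a metric name to a list of (step, value) pairs,
    where step is the x coordinate to plot the value against.
    """
    now_ms = int(time.time() * 1000)  # timestamps are in milliseconds
    metrics = [
        {"key": key, "value": value, "timestamp": now_ms, "step": step}
        for key, points in metrics_by_step.items()
        for step, value in points
    ]
    body = {
        "run_id": run_id,
        "metrics": metrics,
        # Param and tag values are sent as strings in this sketch.
        "params": [{"key": k, "value": str(v)} for k, v in (params or {}).items()],
        "tags": [{"key": k, "value": str(v)} for k, v in (tags or {}).items()],
    }
    return json.dumps(body)


# Hypothetical run ID and values, for illustration only.
body = build_log_batch_body(
    "hypothetical-run-id",
    {"loss": [(0, 0.9), (1, 0.7)]},
    params={"lr": 0.01},
    tags={"stage": "demo"},
)
print(body)
```

Grouping metrics, parameters, and tags into one body is what lets a client replace several separate logging calls with a single request.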

Databricks Runtime 5.4 with Conda (Beta)

June 3, 2019

Databricks Runtime with Conda is in Beta. The contents of the supported environments may change in upcoming Beta releases. Changes can include the list of packages or versions of installed packages. Databricks Runtime 5.4 with Conda is built on top of Databricks Runtime 5.4 (EoS).

We're excited to introduce Databricks Runtime 5.4 with Conda, which lets you take advantage of Conda to manage Python libraries and environments. This runtime offers two root Conda environment options at cluster creation:

- Databricks Standard environment includes updated versions of many popular Python packages. This environment is intended as a drop-in replacement for existing notebooks that run on Databricks Runtime. This is the default Databricks Conda-based runtime environment.

- Databricks Minimal environment contains the minimum packages required for PySpark and Databricks Python notebook functionality. This environment is ideal if you want to customize the runtime with various Python packages.

See the complete release notes at Databricks Runtime 5.4 with Conda (EoS).

Databricks Runtime 5.4 for Machine Learning

June 3, 2019

Databricks Runtime 5.4 ML is built on top of Databricks Runtime 5.4 (EoS). It contains many popular machine learning libraries, including TensorFlow, PyTorch, Keras, and XGBoost, and provides distributed TensorFlow training using Horovod.

It includes the following new features:

- MLlib integration with MLflow (Public Preview).

- Hyperopt with new SparkTrials class pre-installed (Public Preview).

- HorovodRunner output sent from Horovod to the Spark driver node is now visible in notebook cells.

- XGBoost Python package pre-installed.

For details, see Databricks Runtime 5.4 for ML (EoS).

Databricks Runtime 5.4

June 3, 2019

Databricks Runtime 5.4 is now available. Databricks Runtime 5.4 includes Apache Spark 2.4.2, upgraded Python, R, Java, and Scala libraries, and the following new features:

- Delta Lake on Databricks adds Auto Optimize (Public Preview)

- Use your favorite IDE and notebook server with Databricks Connect

- Library utilities generally available

- Binary file data source

- AWS Glue metastore (Public Preview)

- Optimized DBFS FUSE mount for deep learning workloads

For details, see Databricks Runtime 5.4 (EoS).