SQL data type rules

Applies to: Databricks SQL, Databricks Runtime

Databricks uses several rules to resolve conflicts among data types:

- Promotion safely expands a type to a wider type.

- Implicit downcasting narrows a type to a narrower type. It is the opposite of promotion.

- Implicit crosscasting transforms a type into a type of another type family.

You can also explicitly cast between many types:

- cast function casts between most types, and returns errors if it cannot.

- try_cast function works like cast function but returns NULL when passed invalid values.

- Other builtin functions cast between types using provided format directives.

Type promotion

Type promotion is the process of casting a type into another type of the same type family which contains all possible values of the original type.

Therefore, type promotion is a safe operation. For example, TINYINT has a range from -128 to 127, and all of its possible values can be safely promoted to INTEGER.

Type precedence list

The type precedence list defines whether values of a given data type can be implicitly promoted to another data type.

| Data type | Precedence list (from narrowest to widest) |
|---|---|
| TINYINT | TINYINT -> SMALLINT -> INT -> BIGINT -> DECIMAL -> FLOAT (1) -> DOUBLE |
| SMALLINT | SMALLINT -> INT -> BIGINT -> DECIMAL -> FLOAT (1) -> DOUBLE |
| INT | INT -> BIGINT -> DECIMAL -> FLOAT (1) -> DOUBLE |
| BIGINT | BIGINT -> DECIMAL -> FLOAT (1) -> DOUBLE |
| DECIMAL | DECIMAL -> FLOAT (1) -> DOUBLE |
| FLOAT | FLOAT (1) -> DOUBLE |
| DOUBLE | DOUBLE |
| DATE | DATE -> TIMESTAMP |
| TIME | TIME (4) |
| TIMESTAMP | TIMESTAMP |
| ARRAY | ARRAY (2) |
| BINARY | BINARY |
| BOOLEAN | BOOLEAN |
| INTERVAL | INTERVAL |
| GEOGRAPHY | GEOGRAPHY(ANY) |
| GEOMETRY | GEOMETRY(ANY) |
| MAP | MAP (2) |
| STRING | STRING |
| STRUCT | STRUCT (2) |
| VARIANT | VARIANT |
| OBJECT | OBJECT (3) |

(1) For least common type resolution FLOAT is skipped to avoid loss of precision.

(2) For a complex type the precedence rule applies recursively to its component elements.

(3) OBJECT exists only within a VARIANT.

(4) The least common type of TIME(n) and TIME(m) is TIME(max(n, m)). TIME does not promote to TIMESTAMP or any other type.
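
The precedence list can be read as a simple lookup. The following Python sketch is only an illustrative model, not Databricks code: the names `NUMERIC_CHAIN`, `DATETIME_CHAIN`, `precedence_list`, and `can_promote` are hypothetical, and the sketch ignores type parameters, complex types, and the special rules for STRING and NULL.

```python
# Promotion chains copied from the type precedence list, narrowest first.
NUMERIC_CHAIN = ["TINYINT", "SMALLINT", "INT", "BIGINT", "DECIMAL", "FLOAT", "DOUBLE"]
DATETIME_CHAIN = ["DATE", "TIMESTAMP"]


def precedence_list(data_type: str) -> list[str]:
    """Return the types that data_type can be promoted to, narrowest first."""
    for chain in (NUMERIC_CHAIN, DATETIME_CHAIN):
        if data_type in chain:
            return chain[chain.index(data_type):]
    # Every other type (TIME, BINARY, BOOLEAN, ...) only "promotes" to itself.
    return [data_type]


def can_promote(source: str, target: str) -> bool:
    """True if target appears in the precedence list of source."""
    return target in precedence_list(source)


print(can_promote("TINYINT", "INT"))     # True
print(can_promote("DOUBLE", "INT"))      # False: that would be a downcast
print(can_promote("TIME", "TIMESTAMP"))  # False: TIME promotes to no other type
```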

Strings and NULL

Special rules apply for STRING and untyped NULL:

- NULL can be promoted to any other type.

- STRING can be promoted to BIGINT, BINARY, BOOLEAN, DATE, DOUBLE, INTERVAL, TIME, and TIMESTAMP. If the actual string value cannot be cast to the least common type, Databricks raises a runtime error. When promoting to INTERVAL, the string value must match the interval's units.
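
One way to picture how STRING combines with another type is to walk the other type's precedence list until a type is found that STRING can also be promoted to. The Python sketch below is a rough model under that assumption. The function name `common_type_with_string` and the constants are hypothetical, and the sketch covers only unparameterized simple types.

```python
NUMERIC_CHAIN = ["TINYINT", "SMALLINT", "INT", "BIGINT", "DECIMAL", "FLOAT", "DOUBLE"]
DATETIME_CHAIN = ["DATE", "TIMESTAMP"]

# Types that STRING can be promoted to, as listed in the rule above.
STRING_TARGETS = {"BIGINT", "BINARY", "BOOLEAN", "DATE", "DOUBLE",
                  "INTERVAL", "TIME", "TIMESTAMP"}


def common_type_with_string(other: str):
    """Model the least common type of STRING and `other`, or None if there is none."""
    if other == "STRING":
        return "STRING"
    chain = [other]
    for known in (NUMERIC_CHAIN, DATETIME_CHAIN):
        if other in known:
            chain = known[known.index(other):]
    # The narrowest type on the chain that STRING can also reach wins.
    for candidate in chain:
        if candidate in STRING_TARGETS:
            return candidate
    return None


print(common_type_with_string("INT"))      # BIGINT
print(common_type_with_string("DECIMAL"))  # DOUBLE
print(common_type_with_string("DATE"))     # DATE
```

The type is chosen without looking at the string's value, which is why a string such as '6.1' can still fail at runtime when the chosen type is BIGINT.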

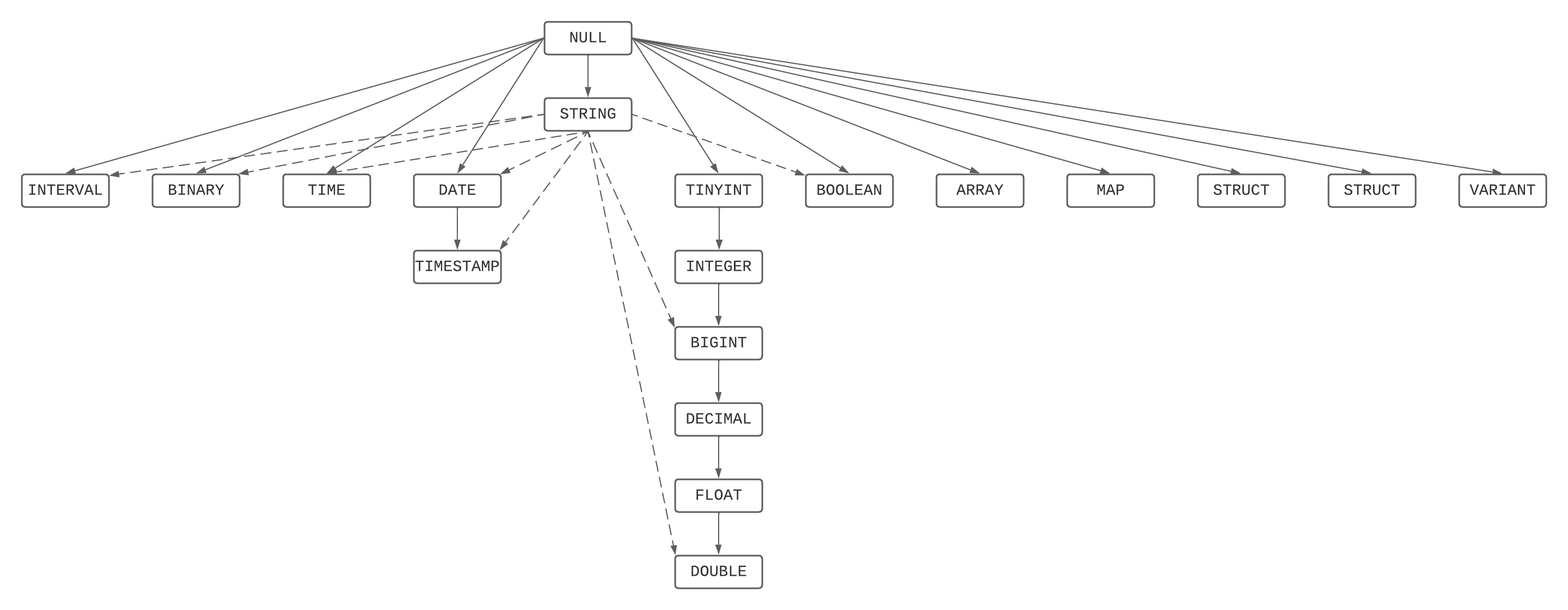

Type precedence graph

This is a graphical depiction of the precedence hierarchy, combining the type precedence list and strings and NULLs rules.

Least common type resolution

The least common type from a set of types is the narrowest type reachable from the type precedence graph by all elements of the set of types.

The least common type resolution is used to:

- Decide whether a function that expects a parameter of a given type can be invoked using an argument of a narrower type.

- Derive the argument type for a function that expects a shared argument type for multiple parameters, such as coalesce, in, least, or greatest.

- Derive the operand types for operators such as arithmetic operations or comparisons.

- Derive the result type for expressions such as the case expression.

- Derive the element, key, or value types for array and map constructors.

- Derive the result type of UNION, INTERSECT, or EXCEPT set operators.
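
As a minimal sketch of the definition above, the least common type of unparameterized simple types can be found by scanning one type's precedence list for the first entry that every other type can also reach. The helper names `chain_of` and `least_common_type` are hypothetical. The model deliberately leaves out the special handling of STRING, NULL, FLOAT, complex types, and type parameters.

```python
CHAINS = [
    ["TINYINT", "SMALLINT", "INT", "BIGINT", "DECIMAL", "FLOAT", "DOUBLE"],
    ["DATE", "TIMESTAMP"],
]


def chain_of(data_type: str) -> list[str]:
    """Types reachable from data_type by promotion, narrowest first."""
    for chain in CHAINS:
        if data_type in chain:
            return chain[chain.index(data_type):]
    return [data_type]


def least_common_type(types: list[str]):
    """Return the narrowest type reachable by all inputs, or None if none exists."""
    if not types:
        return None
    chains = [chain_of(t) for t in types]
    # chains[0] is ordered narrowest to widest, so the first hit is the narrowest.
    for candidate in chains[0]:
        if all(candidate in chain for chain in chains[1:]):
            return candidate
    return None


print(least_common_type(["TINYINT", "BIGINT"]))  # BIGINT
print(least_common_type(["DATE", "TIMESTAMP"]))  # TIMESTAMP
print(least_common_type(["INT", "DATE"]))        # None: no shared chain
```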

Parameterized types

Some data types carry parameters that affect their precision or scale. When the least common type involves parameterized types, the result parameters are computed so that values of both input types can be represented without loss.

DECIMAL(p, s)

The least common type of DECIMAL(p1, s1) and DECIMAL(p2, s2) is a DECIMAL whose scale and precision accommodate all values from both types:

resultScale = max(s1, s2)

maxIntegerDigits = max(p1 - s1, p2 - s2)

resultPrecision = min(38, resultScale + maxIntegerDigits)

If resultScale + maxIntegerDigits exceeds 38 (the maximum DECIMAL precision), the precision is capped at 38 and the scale is reduced to preserve integer digits.

For example, the least common type of DECIMAL(10, 2) and DECIMAL(12, 5) is DECIMAL(13, 5): max(2, 5) = 5 scale digits and max(10 - 2, 12 - 5) = max(8, 7) = 8 integer digits require a precision of 8 + 5 = 13.
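
The three formulas above translate directly into code. In the Python sketch below, the function name `decimal_least_common_type` is hypothetical. The overflow branch is an assumption: the text says only that the scale is reduced to preserve integer digits, so the sketch assumes the scale shrinks to whatever remains after the integer digits, and the exact behavior may differ.

```python
MAX_PRECISION = 38  # maximum DECIMAL precision


def decimal_least_common_type(p1: int, s1: int, p2: int, s2: int) -> tuple[int, int]:
    """Return (precision, scale) of the least common DECIMAL type."""
    result_scale = max(s1, s2)
    max_integer_digits = max(p1 - s1, p2 - s2)
    result_precision = min(MAX_PRECISION, result_scale + max_integer_digits)
    if result_scale + max_integer_digits > MAX_PRECISION:
        # Assumption: give up fractional digits so the integer digits still fit.
        result_scale = max(0, MAX_PRECISION - max_integer_digits)
    return result_precision, result_scale


print(decimal_least_common_type(10, 2, 12, 5))   # (13, 5)
print(decimal_least_common_type(38, 2, 20, 10))  # (38, 2) under the assumption above
```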

TIME(p)

The least common type of TIME(n) and TIME(m) is TIME(max(n, m)).

For example, the least common type of TIME(0) and TIME(6) is TIME(6).

Additional rules

Special rules are applied if the least common type resolves to FLOAT. If any of the contributing types is an exact numeric type (TINYINT, SMALLINT, INTEGER, BIGINT, or DECIMAL) the least common type is pushed to DOUBLE to avoid potential loss of digits.
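
The FLOAT rule can be modeled as a small post-processing step on an already resolved candidate type. This Python sketch is illustrative only, and the names `EXACT_NUMERIC` and `adjust_float_result` are hypothetical.

```python
# Exact numeric types named in the rule above (INT and INTEGER are synonyms).
EXACT_NUMERIC = {"TINYINT", "SMALLINT", "INT", "INTEGER", "BIGINT", "DECIMAL"}


def adjust_float_result(candidate: str, input_types: list[str]) -> str:
    """Push a FLOAT result to DOUBLE when any input is an exact numeric type."""
    if candidate == "FLOAT" and any(t in EXACT_NUMERIC for t in input_types):
        return "DOUBLE"
    return candidate


print(adjust_float_result("FLOAT", ["INT", "FLOAT"]))    # DOUBLE
print(adjust_float_result("FLOAT", ["FLOAT", "FLOAT"]))  # FLOAT
```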

When the least common type is a STRING the collation is computed following the collation precedence rules.

Implicit downcasting and crosscasting

Databricks employs these forms of implicit casting only on function and operator invocation, and only where it can unambiguously determine the intent.

- Implicit downcasting

  Implicit downcasting automatically casts a wider type to a narrower type without requiring you to specify the cast explicitly. Downcasting is convenient, but it carries the risk of unexpected runtime errors if the actual value fails to be representable in the narrow type.

  Downcasting applies the type precedence list in reverse order. The GEOGRAPHY and GEOMETRY data types are never downcast.

- Implicit crosscasting

  Implicit crosscasting casts a value from one type family to another without requiring you to specify the cast explicitly.

  Databricks supports implicit crosscasting from:

  - Any simple type, except BINARY, GEOGRAPHY, and GEOMETRY, to STRING.

  - A STRING to any simple type, except GEOGRAPHY and GEOMETRY.

Casting on function invocation

Given a resolved function or operator, the following rules apply, in the order they are listed, for each parameter and argument pair:

- If a supported parameter type is part of the argument's type precedence graph, Databricks promotes the argument to that parameter type.

  In most cases the function description explicitly states the supported types or chain, such as "any numeric type".

  For example, sin(expr) operates on DOUBLE but will accept any numeric.

- If the expected parameter type is a STRING and the argument is a simple type, Databricks crosscasts the argument to the string parameter type.

  For example, substr(str, start, len) expects str to be a STRING. Instead, you can pass a numeric or datetime type.

- If the argument type is a STRING and the expected parameter type is a simple type, Databricks crosscasts the string argument to the widest supported parameter type.

  For example, date_add(date, days) expects a DATE and an INTEGER.

  If you invoke date_add() with two STRINGs, Databricks crosscasts the first STRING to DATE and the second STRING to an INTEGER.

- If the function expects a numeric type, such as an INTEGER, or a DATE type, but the argument is a more general type, such as a DOUBLE or TIMESTAMP, Databricks implicitly downcasts the argument to that parameter type.

  For example, date_add(date, days) expects a DATE and an INTEGER.

  If you invoke date_add() with a TIMESTAMP and a BIGINT, Databricks downcasts the TIMESTAMP to DATE by removing the time component and the BIGINT to an INTEGER.

- Otherwise, Databricks raises an error.
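
The ordered rules above can be sketched as a decision function for one argument and one parameter type. This Python model is an approximation under stated assumptions. `SIMPLE_TYPES` is an illustrative subset chosen for the example, not an official list, and `chain_of` and `resolve_cast` are hypothetical names. The model also assumes a single supported parameter type rather than a set.

```python
CHAINS = [
    ["TINYINT", "SMALLINT", "INT", "BIGINT", "DECIMAL", "FLOAT", "DOUBLE"],
    ["DATE", "TIMESTAMP"],
]
# Assumption: an illustrative subset of the simple types.
SIMPLE_TYPES = {t for chain in CHAINS for t in chain} | {
    "BINARY", "BOOLEAN", "INTERVAL", "STRING", "TIME", "GEOGRAPHY", "GEOMETRY"}


def chain_of(data_type: str) -> list[str]:
    """Full precedence chain containing data_type, narrowest first."""
    for chain in CHAINS:
        if data_type in chain:
            return chain
    return [data_type]


def resolve_cast(arg_type: str, param_type: str) -> str:
    """Return which kind of cast applies, checking the rules in their listed order."""
    if arg_type == param_type:
        return "none"
    chain = chain_of(arg_type)
    position = chain.index(arg_type)
    if param_type in chain[position + 1:]:
        return "promote"
    if param_type == "STRING" and arg_type in SIMPLE_TYPES - {"BINARY", "GEOGRAPHY", "GEOMETRY"}:
        return "crosscast"
    if arg_type == "STRING" and param_type in SIMPLE_TYPES - {"GEOGRAPHY", "GEOMETRY"}:
        return "crosscast"
    if param_type in chain[:position]:
        return "downcast"  # only numeric and DATE/TIMESTAMP chains can reach here
    raise TypeError(f"cannot pass {arg_type} where {param_type} is expected")


print(resolve_cast("TINYINT", "INT"))     # promote
print(resolve_cast("INT", "STRING"))      # crosscast
print(resolve_cast("STRING", "DATE"))     # crosscast
print(resolve_cast("TIMESTAMP", "DATE"))  # downcast
```

Checking promotion first means the safe conversion always wins when it is available. Downcasting is tried last because it is the only step that can fail on the actual value at runtime.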

Examples

The coalesce function accepts any set of argument types as long as they share a least common type.

The result type is the least common type of the arguments.

-- The least common type of TINYINT and BIGINT is BIGINT

> SELECT typeof(coalesce(1Y, 1L, NULL));

BIGINT

-- INTEGER and DATE do not share a precedence chain or support crosscasting in either direction.

> SELECT typeof(coalesce(1, DATE'2020-01-01'));

Error: DATATYPE_MISMATCH.DATA_DIFF_TYPES

-- Both are ARRAYs and the elements have a least common type

> SELECT typeof(coalesce(ARRAY(1Y), ARRAY(1L)));

ARRAY<BIGINT>

-- The least common type of INT and FLOAT is DOUBLE

> SELECT typeof(coalesce(1, 1F));

DOUBLE

> SELECT typeof(coalesce(1L, 1F));

DOUBLE

> SELECT typeof(coalesce(1BD, 1F));

DOUBLE

-- The least common type between an INT and STRING is BIGINT

> SELECT typeof(coalesce(5, '6'));

BIGINT

-- The least common type is a BIGINT, but the value is not BIGINT.

> SELECT coalesce('6.1', 5);

Error: CAST_INVALID_INPUT

-- The least common type between a DECIMAL and a STRING is a DOUBLE

> SELECT typeof(coalesce(1BD, '6'));

DOUBLE

-- Two distinct explicit collations result in an error

> SELECT collation(coalesce('hello' COLLATE UTF8_BINARY,

'world' COLLATE UNICODE));

Error: COLLATION_MISMATCH.EXPLICIT

-- The resulting collation between two distinct implicit collations is indeterminate

> SELECT collation(coalesce(c1, c2))

FROM VALUES('hello' COLLATE UTF8_BINARY,

'world' COLLATE UNICODE) AS T(c1, c2);

NULL

-- The resulting collation between an explicit and an implicit collation is the explicit collation.

> SELECT collation(coalesce(c1 COLLATE UTF8_BINARY, c2))

FROM VALUES('hello',

'world' COLLATE UNICODE) AS T(c1, c2);

UTF8_BINARY

-- The resulting collation between an implicit and the default collation is the implicit collation.

> SELECT collation(coalesce(c1, 'world'))

FROM VALUES('hello' COLLATE UNICODE) AS T(c1);

UNICODE

-- The resulting collation between the default collation and the indeterminate collation is the default collation.

> SELECT collation(coalesce(coalesce('hello' COLLATE UTF8_BINARY, 'world' COLLATE UNICODE), 'world'));

UTF8_BINARY

-- Least common type between GEOGRAPHY(srid) and GEOGRAPHY(ANY)

> SELECT typeof(coalesce(st_geogfromtext('POINT(1 2)'), to_geography('POINT(3 4)'), NULL));

geography(any)

-- Least common type between GEOMETRY(srid1) and GEOMETRY(srid2)

> SELECT typeof(coalesce(st_geomfromtext('POINT(1 2)', 4326), st_geomfromtext('POINT(3 4)', 3857), NULL));

geometry(any)

-- Least common type between GEOMETRY(srid1) and GEOMETRY(ANY)

> SELECT typeof(coalesce(st_geomfromtext('POINT(1 2)', 4326), to_geometry('POINT(3 4)'), NULL));

geometry(any)

-- Least common type between DECIMAL(10,2) and DECIMAL(12,5): precision accommodates both

> SELECT typeof(coalesce(CAST(1 AS DECIMAL(10,2)), CAST(1 AS DECIMAL(12,5))));

DECIMAL(13,5)

-- Least common type between TIME(0) and TIME(6) is the wider precision

> SELECT typeof(coalesce(TIME'10:30:00', CAST(TIME'10:30:00.123456' AS TIME(6))));

TIME(6)

The substring function expects arguments of type STRING for the string and INTEGER for the start and length parameters.

-- Promotion of TINYINT to INTEGER

> SELECT substring('hello', 1Y, 2);

he

-- No casting

> SELECT substring('hello', 1, 2);

he

-- Casting of a literal string

> SELECT substring('hello', '1', 2);

he

-- Downcasting of a BIGINT to an INT

> SELECT substring('hello', 1L, 2);

he

-- Crosscasting from STRING to INTEGER

> SELECT substring('hello', str, 2)

FROM VALUES(CAST('1' AS STRING)) AS T(str);

he

-- Crosscasting from INTEGER to STRING

> SELECT substring(12345, 2, 2);

23

|| (CONCAT) allows implicit crosscasting to string.

-- A numeric is cast to STRING

> SELECT 'This is a numeric: ' || 5.4E10;

This is a numeric: 5.4E10

-- A date is cast to STRING

> SELECT 'This is a date: ' || DATE'2021-11-30';

This is a date: 2021-11-30

date_add can be invoked with a TIMESTAMP or BIGINT due to implicit downcasting.

> SELECT date_add(TIMESTAMP'2011-11-30 08:30:00', 5L);

2011-12-05

date_add can be invoked with STRINGs due to implicit crosscasting.

> SELECT date_add('2011-11-30 08:30:00', '5');

2011-12-05