External tables

Applies to: Databricks SQL, Databricks Runtime

Unity Catalog and the built-in Databricks Hive metastore use default locations for managed tables. Unity Catalog introduces several new securable objects to grant privileges to data in cloud object storage.

- Storage credential: a Unity Catalog object used to abstract long-term credentials from cloud storage providers.

- External location: a Unity Catalog object used to associate a cloud object storage URI with a storage credential.

- External table: a Unity Catalog table created in a Unity Catalog-managed external location.
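As a minimal sketch of how these objects fit together, the statements below register an external location on top of a storage credential and let a group create external tables under it. The credential name `finance_cred` is hypothetical and is assumed to exist already; the URL and the `finance_loc` and `finance` names are borrowed from the examples later in this article.

```sql
-- Assumption: a storage credential named finance_cred (hypothetical) already exists.
CREATE EXTERNAL LOCATION IF NOT EXISTS finance_loc
  URL 's3://depts/finance'
  WITH (STORAGE CREDENTIAL finance_cred)
  COMMENT 'Finance department data';

-- Allow the finance group to create external tables under this location.
GRANT CREATE EXTERNAL TABLE ON EXTERNAL LOCATION finance_loc TO `finance`;
```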

External table

An external table is a table that references an external storage path by using a LOCATION clause.

The storage path should be contained in an existing external location to which you have been granted access.

Alternatively, you can reference a storage credential to which you have been granted access.

Using external tables abstracts away the storage path, external location, and storage credential for users who are granted access to the external table.
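The two ways of supplying access can be sketched as follows. The table names, column definitions, and the credential name `finance_cred` are hypothetical; the paths follow the pattern used in the examples in this article.

```sql
-- Option 1: the path falls under an external location you have been granted access to.
CREATE TABLE IF NOT EXISTS quarterly_reports (report_id BIGINT, filed_on DATE)
  LOCATION 's3://depts/finance/quarterly_reports';

-- Option 2: name a storage credential explicitly (finance_cred is an assumed name).
CREATE TABLE IF NOT EXISTS annual_reports (report_id BIGINT, filed_on DATE)
  LOCATION 's3://depts/finance/annual_reports'
  WITH (CREDENTIAL finance_cred);
```

In both cases, users who are later granted access to the table query it by name and do not need to know the path or the credential.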

If a schema (database) is registered in your workspace-level Hive metastore, dropping that schema using the CASCADE option causes all files in that schema location to be deleted recursively, regardless of the table type (managed or external).

If the schema is registered to a Unity Catalog metastore, the files for Unity Catalog managed tables are deleted recursively. However, the files for external tables are not deleted. You must manage those files using the cloud storage provider directly.

Therefore, to avoid accidental data loss, you should never register a schema in a Hive metastore to a location with existing data. Nor should you create new external tables in a location managed by Hive metastore schemas or containing Unity Catalog managed tables.
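Before dropping a schema with CASCADE, it can help to confirm where the schema and its tables store their files. The sketch below assumes a hypothetical schema named `finance_schema` that contains the `sec_filings` table; the exact rows returned by DESCRIBE vary by metastore and runtime version.

```sql
-- Inspect the schema's properties, including its storage location if one is set.
DESCRIBE SCHEMA EXTENDED finance_schema;

-- The detailed table information reports the table type (MANAGED or EXTERNAL)
-- and the storage location of the table's files.
DESCRIBE TABLE EXTENDED finance_schema.sec_filings;

-- Run only after confirming which files would be affected:
-- DROP SCHEMA finance_schema CASCADE;
```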

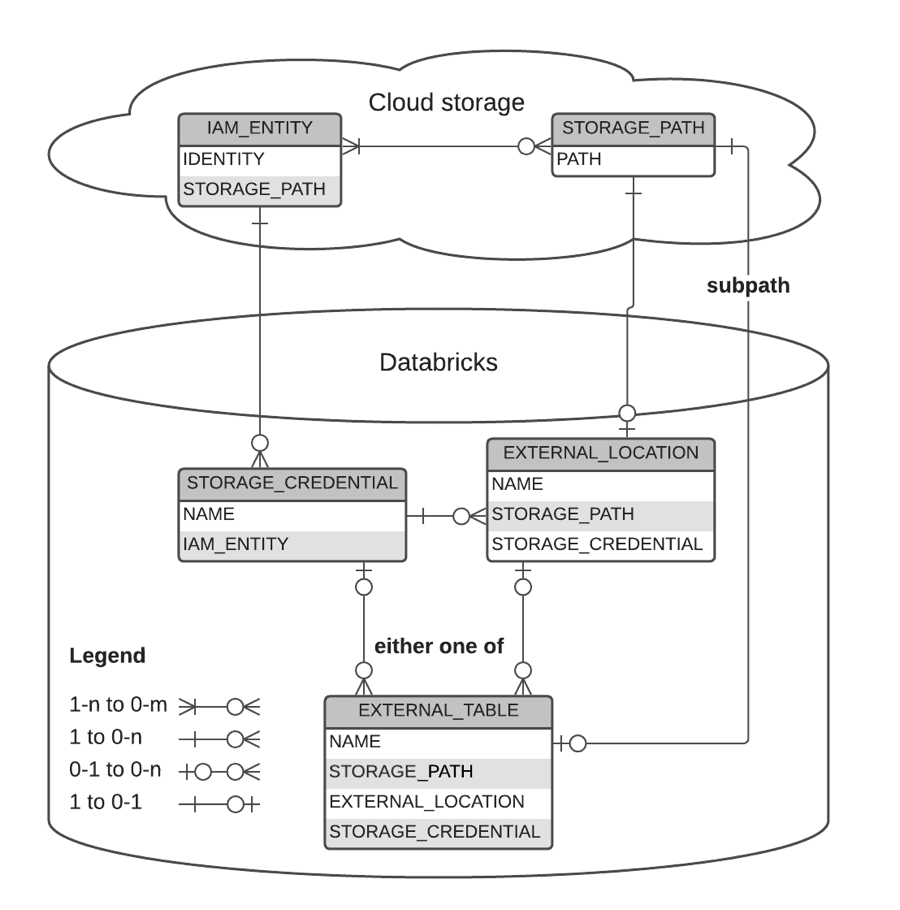

Graphical Representation of relationships

The following diagram describes the relationship between:

- storage credentials

- external locations

- external tables

- storage paths

- IAM entities

- Azure service accounts

Examples

-- `finance` can create an external table over a specific object within the `finance_loc` location

> CREATE TABLE sec_filings LOCATION 's3://depts/finance/sec_filings';

-- Create or replace an external table from a query

> CREATE OR REPLACE TABLE sec_filings

LOCATION 's3://depts/finance/sec_filings'

AS (SELECT * FROM current_filings);

-- Cannot list files under an external table without permissions on it

> LIST 's3://depts/finance/sec_filings';

Error

> LIST 's3://depts/finance/sec_filings/_delta_log';

Error

-- Grant access to sec_filings to all employees

> GRANT SELECT ON TABLE sec_filings TO employee;

-- Any member of the `employee` group can securely read sec_filings

> SELECT count(1) FROM sec_filings;

20

-- Any member of the `employee` group can list files under the sec_filings table

> LIST 's3://depts/finance/sec_filings';

_delta_log

> LIST 's3://depts/finance/sec_filings/_delta_log';

00000.json