Build agents on Databricks

This page provides an overview of tools for building, deploying, and managing AI agents on Databricks. To learn more about agents, see Agent system design patterns.

-

- Get started: no-code GenAI

- Try AI Playground for UI-based testing and prototyping.

-

- Get started: MLflow 3 for GenAI

- Try MLflow for GenAI tracing, evaluation, and human feedback.

Serve and query gen AI large language models (LLMs)

Serve a curated set of gen AI models from LLM providers such as OpenAI and Anthropic and make them available through secure, scalable APIs.

Feature | Description |

|---|---|

Serve gen AI models, including open source and third-party models such as Meta Llama, Anthropic Claude, OpenAI GPT, and more. |

Build and deploy enterprise-grade AI agents

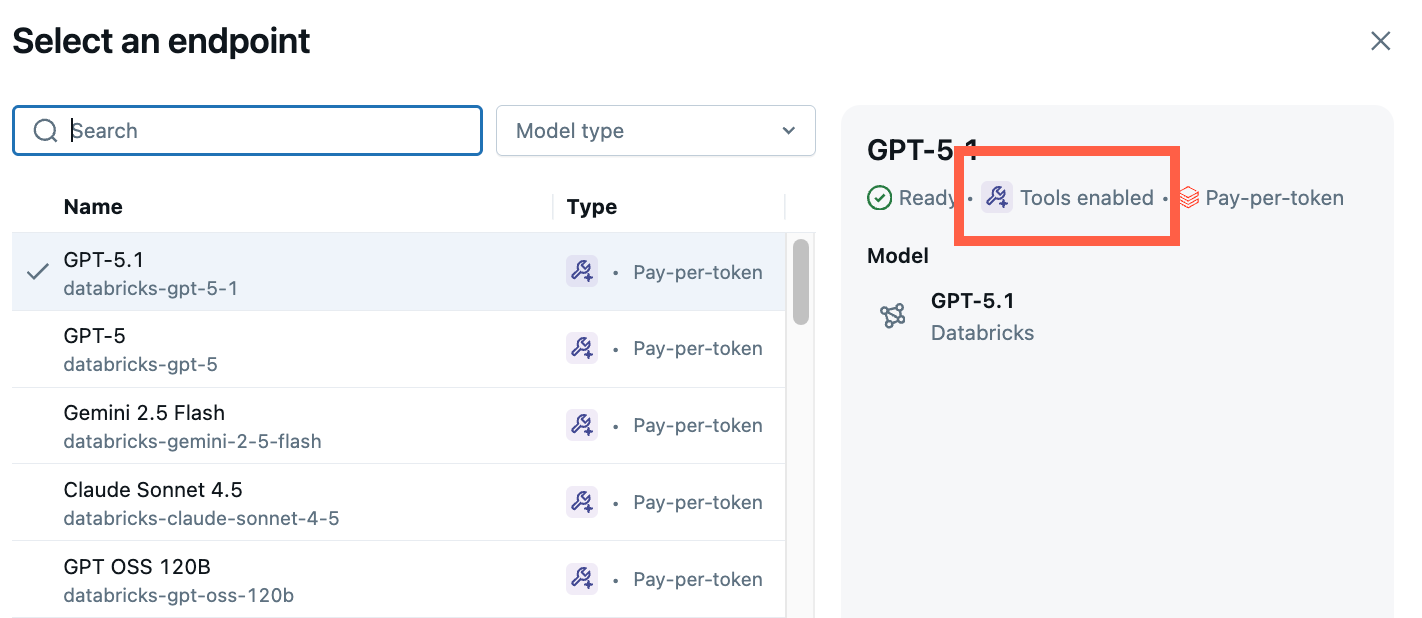

Build and deploy your own agents, including tool-calling agents, retrieval-augmented generation apps, and multi-agent systems. For a no-code starting point, use the AI Playground to select an LLM, add tools, and chat with the agent to test its responses before exporting to code.

Feature | Description |

|---|---|

Prototype and test AI agents in a no-code environment. Quickly experiment with agent behaviors and tool integrations before generating code for deployment. | |

Build and optimize domain-specific AI chatbots using an intuitive interface. | |

Author, deploy, and evaluate agents using Python. Supports agents written with any authoring library, including LangGraph, LangChain, OpenAI, and LlamaIndex. Integrated with MLflow Tracing. Iterate quickly using Databricks Apps. To get started quickly, see Get started with AI agents. | |

Create agent tools to query structured and unstructured data, run code, or connect to external service APIs. | |

Standardize how agents connect to data and tools with a secure, consistent interface. |

Evaluate, debug, and optimize agents

Track agent performance, collect feedback, and drive quality improvements with evaluation and tracing tools.

Feature | Description |

|---|---|

Use MLflow Tracing for end-to-end observability. Log every step your agent takes to debug, monitor, and audit agent behavior in development and production. | |

Use Agent Evaluation and MLflow to measure quality, cost, and latency. Collect feedback from stakeholders and subject matter experts through built-in review apps and use LLM judges to identify and resolve quality issues. | |

Use the same evaluation configuration (LLM judges and custom metrics) in offline evaluation and online monitoring. |