Classification with AutoML

Use AutoML to automatically find the best classification algorithm and hyperparameter configuration to predict the label or category of a given input.

Set up classification experiment with the UI

You can set up a classification problem using the AutoML UI with the following steps:

-

In the sidebar, select Experiments.

-

In the Classification card, select Start training.

The Configure AutoML experiment page displays. On this page, you configure the AutoML process, specifying the dataset, problem type, target or label column to predict, metric to use to evaluate and score the experiment runs, and stopping conditions.

-

In the Compute field, select a cluster running Databricks Runtime ML.

-

Under Dataset, select Browse.

-

Navigate to the table you want to use and click Select. The table schema appears.

- In Databricks Runtime 10.3 ML and above, you can specify which columns AutoML should use for training. You cannot remove the column selected as the prediction target or the time column to split the data.

- In Databricks Runtime 10.4 LTS ML and above, you can specify how null values are imputed by selecting from the Impute with dropdown. By default, AutoML selects an imputation method based on the column type and content.

noteIf you specify a non-default imputation method, AutoML does not perform semantic type detection.

-

Click in the Prediction target field. A drop-down appears listing the columns shown in the schema. Select the column you want the model to predict.

-

The Experiment name field shows the default name. To change it, type the new name in the field.

You can also:

- Specify additional configuration options.

- Use existing feature tables in Feature Store to augment the original input dataset.

Advanced configurations

Open the Advanced Configuration (optional) section to access these parameters.

- The evaluation metric is the primary metric used to score the runs.

- In Databricks Runtime 10.4 LTS ML and above, you can exclude training frameworks from consideration. By default, AutoML trains models using frameworks listed under AutoML algorithms.

- You can edit the stopping conditions. Default stopping conditions are:

- For forecasting experiments, stop after 120 minutes.

- In Databricks Runtime 10.4 LTS ML and below, for classification and regression experiments, stop after 60 minutes or after completing 200 trials, whichever happens first. For Databricks Runtime 11.0 ML and above, the number of trials is not used as a stopping condition.

- In Databricks Runtime 10.4 LTS ML and above, for classification and regression experiments, AutoML incorporates early stopping; it stops training and tuning models if the validation metric is no longer improving.

- In Databricks Runtime 10.4 LTS ML and above, you can select a

time columnto split the data for training, validation, and testing in chronological order (applies only to classification and regression). - Databricks recommends leaving the Data directory field empty. Not populating this field triggers the default behavior of securely storing the dataset as an MLflow artifact. A DBFS path can be specified, but in this case, the dataset does not inherit the AutoML experiment's access permissions.

Run the experiment and monitor the results

To start the AutoML experiment, click Start AutoML. The experiment starts to run, and the AutoML training page appears. To refresh the runs table, click .

View experiment progress

From this page, you can:

- Stop the experiment at any time.

- Open the data exploration notebook.

- Monitor runs.

- Navigate to the run page for any run.

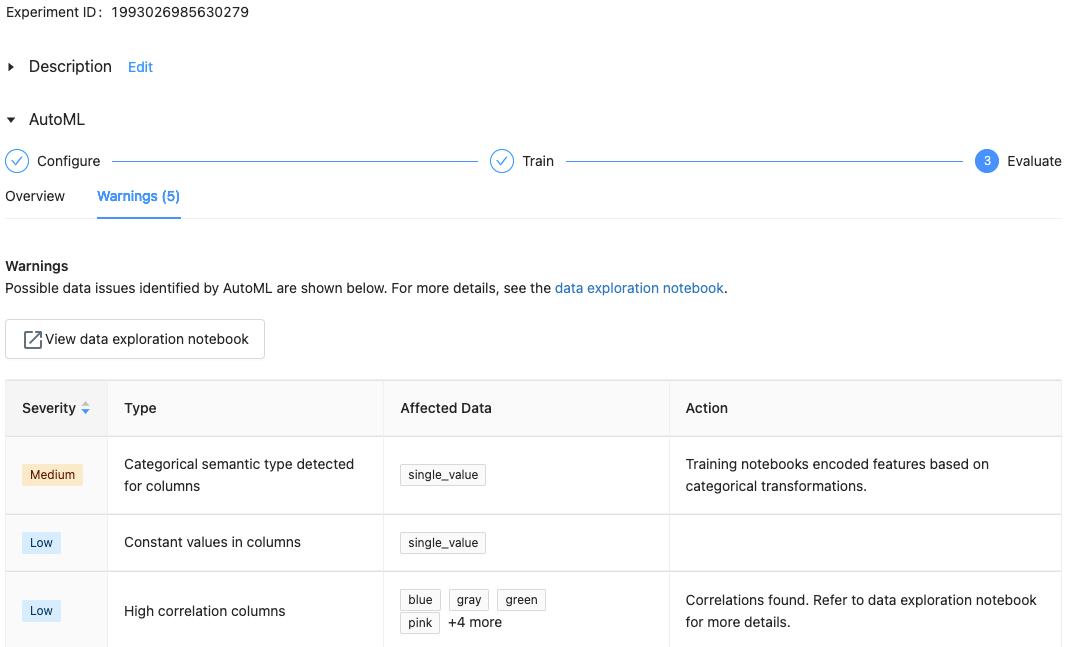

With Databricks Runtime 10.1 ML and above, AutoML displays warnings for potential issues with the dataset, such as unsupported column types or high cardinality columns.

Databricks does its best to indicate potential errors or issues. However, this may not be comprehensive and may not capture the issues or errors you may be searching for.

To see any warnings for the dataset, click the Warnings tab on the training page or the experiment page after the experiment completes.

View results

When the experiment completes, you can:

- Register and deploy one of the models with MLflow.

- Select View notebook for best model to review and edit the notebook that created the best model.

- Select View data exploration notebook to open the data exploration notebook.

- Search, filter, and sort the runs in the runs table.

- See details for any run:

- The generated notebook containing source code for a trial run can be found by clicking into the MLflow run. The notebook is saved in the Artifacts section of the run page. You can download this notebook and import it into the workspace, if downloading artifacts is enabled by your workspace administrators.

- To view the run results, click in the Models column or the Start Time column. The run page appears, showing information about the trial run (such as parameters, metrics, and tags) and artifacts created by the run, including the model. This page also includes code snippets that you can use to make predictions with the model.

To return to this AutoML experiment later, find it in the table on the Experiments page. The results of each AutoML experiment, including the data exploration and training notebooks, are stored in a databricks_automl folder in the home folder of the user who ran the experiment.

Register and deploy a model

Register and deploy your model using the AutoML UI. When a run completes, the top row shows the best model based on the primary metric.

- Select the link in the Models column for the model you want to register.

- Select

to register it to Unity Catalog or Model Registry.

noteDatabricks recommends you register models to Unity Catalog for the latest features.

- After registration, you can deploy the model to a custom model serving endpoint.

No module named 'pandas.core.indexes.numeric

When serving a model built using AutoML with Model Serving, you may get the error: No module named 'pandas.core.indexes.numeric.

This is due to an incompatible pandas version between AutoML and the model serving endpoint environment. You can resolve this error by running the add-pandas-dependency.py script. The script edits the requirements.txt and conda.yaml for your logged model to include the appropriate pandas dependency version: pandas==1.5.3

- Modify the script to include the

run_idof the MLflow run where your model was logged. - Re-register the model to Unity Catalog or the model registry.

- Try serving the new version of the MLflow model.